Assassin's Creed Valhalla - what PC hardware is needed to match PS5 visuals?

And which settings get the best from your rig?

With the arrival of the next console generation, it's inevitable that the hardware requirements for PC software will rise as graphical quality and complexity increase. Xbox Series X and PlayStation 5 reset the baseline, and we wanted to outline what kind of PC graphics kit is required to match or even exceed them. To do this, we broke down the visual make-up of Assassin's Creed Valhalla, matching PS5 and PC quality settings and working out optimised settings in the process: we measure the bang for the buck of every preset and suggest the best settings for PC users.

First of all, it's worth pointing out that results may vary considerably from game to game. In assessing Watch Dogs Legion, I concluded that Xbox Series X could be matched by a PC running an Nvidia RTX 2060 Super - mostly owing to the onerous demands of ray tracing, an area where GeForce hardware has a clear advantage. With Assassin's Creed Valhalla, the picture is very different. The game doesn't seem to run that well on Nvidia kit, and there's no ray tracing in use, nullifying a key GeForce advantage. AMD, meanwhile, fares significantly better: by our reckoning, a Radeon RX 5700 XT should get very close to the PS5 experience.

It's also worth noting that some of this comparison work is theoretical, as there are no like-for-like settings between consoles and PC. The dynamic resolution scaling (DRS) system, for example, is very different. In our pixel count measurements, PS5 spends most of its time between 1440p and 1728p, with many areas and cutscenes locked to 1440p. On PC, perhaps bizarrely, the anti-aliasing system doubles as the DRS system, with the adaptive setting rendering at between 85 and 100 per cent of resolution on each axis, according to load. Put simply, the DRS window on PC is more limited. So to gauge relative performance between PC and console, I used an area of the game that drops below 60fps on PlayStation 5 while rendering at 1440p.
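To put the two DRS windows on the same footing, the short Python sketch below converts them into pixel counts. It assumes 16:9 frames (2560x1440 and 3072x1728) and applies the PC's per-axis percentages to a 1440p output purely for illustration; the helper names are hypothetical. Because the scale applies to both axes, the 85 per cent floor means rendering roughly 72 per cent of the full-resolution pixels, while PS5's 1440p floor is about 69 per cent of its 1728p ceiling.

```python
# Compare the two DRS windows by pixel count.
# Assumes 16:9 frames; the PC window is applied to a 1440p output
# purely for illustration.


def pixels(width: int, height: int) -> int:
    """Total pixels in a frame of the given size."""
    return width * height


def axis_scaled(width: int, height: int, scale: float) -> tuple[int, int]:
    """Apply the same scale factor to both axes, rounding to whole pixels."""
    return round(width * scale), round(height * scale)


def area_fraction(scale: float) -> float:
    """A per-axis scale applies twice, so pixel count scales with its square."""
    return scale * scale


# PS5: observed range from 1440p (floor) to 1728p (ceiling)
ps5_ratio = pixels(2560, 1440) / pixels(3072, 1728)

# PC: adaptive setting spans 85 to 100 per cent on each axis
pc_floor_w, pc_floor_h = axis_scaled(2560, 1440, 0.85)

print(f"PS5 floor vs ceiling: {ps5_ratio:.1%} of the pixels")
print(f"PC floor frame: {pc_floor_w}x{pc_floor_h}, {area_fraction(0.85):.1%} of the pixels")
```

The per-axis framing matters: a 15 per cent cut on each axis removes close to 28 per cent of the pixels, which is why a seemingly small percentage range still covers a meaningful workload change.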

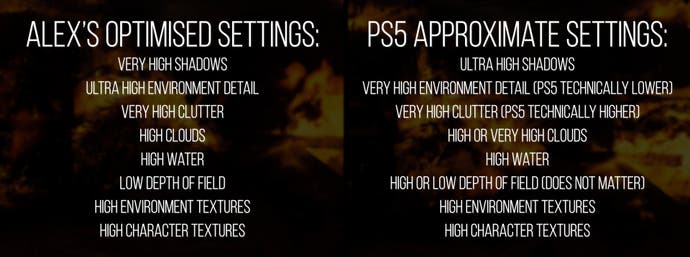

So, what are the PC-equivalent settings used on PlayStation 5? You can see my process in the video directly above, but essentially it starts with ultra high shadows, very high world detail, and ultra high, very high or high for Assassin's Creed's expensive volumetric clouds (all three look more or less identical where they can be directly compared). Meanwhile, perhaps unsurprisingly bearing in mind their prodigious memory allocations, the consoles use max quality textures, while the water setting is closest to PC's high.

So far, so good, but this is where things get a little trickier. The clutter option increases the density of foliage, and in my test scene PlayStation 5's vegetation was even denser than PC's very high maximum. This is one of the very few PC settings without an ultra high option, so my guess is that its absence is a developer oversight. The setting has very little impact on performance: there's just a four per cent difference between very high and low, even though they look worlds apart. That points to a wider problem we'll address later: the lack of scalability in the PC version of the game.

There are other inconsistencies too. For one, all cloth physics run at a sub-native frame-rate on PlayStation 5: 30fps or even lower. On the highest PC settings, cloth runs at the full native frame-rate, and you only get something similar to PS5 if you drop the environmental detail setting to medium. Essentially, the settings lack the granularity for an exact console-to-PC match across the board. Nor is there an exact match for fire rendering, which appears to run at full resolution on PC but at a much lower resolution on PS5. With that said, there are still some intriguing comparisons and conclusions we can draw.
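For reference, the closest matches described above can be summarised as a simple lookup. This is a hypothetical sketch: the dictionary keys are illustrative labels, not the game's actual menu names or configuration-file identifiers, and the clouds entry uses very high as a working choice, since ultra high, very high and high look near-identical where they can be compared.

```python
# Hypothetical labels: not the game's real menu or config-file names.
PS5_EQUIVALENT = {
    "shadows": "ultra high",
    "world_detail": "very high",
    # Ultra high, very high and high look near-identical where comparable;
    # very high is the working assumption used for the stress test.
    "volumetric_clouds": "very high",
    "textures": "max",
    "water": "high",
    # Closest available option only: PS5 foliage is denser than PC's maximum.
    "clutter": "very high",
}

# Areas with no exact PC match, as noted in the text.
NO_EXACT_MATCH = {
    "clutter": "PS5 vegetation exceeds PC's very high maximum",
    "cloth_physics": "PS5 runs cloth at 30fps or lower; PC only approaches this "
                     "with environmental detail at medium",
    "fire": "appears full resolution on PC, much lower on PS5",
}

for setting, level in PS5_EQUIVALENT.items():
    note = NO_EXACT_MATCH.get(setting)
    print(f"{setting:18} {level:11}" + (f"  (approximate: {note})" if note else ""))
```

Keeping the mismatches in a separate table makes it clear which entries are genuine equivalents and which are only the nearest available option.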

In the end, it's clear that this is a very demanding game on PC, but what stood out most to me was the lack of scalability. Some settings, such as depth of field, don't appear to do anything, while the dynamic resolution scaling option is arbitrarily limited and of little practical use. There are other annoyances too: tessellation quality can't be scaled up, so even at the highest setting, terrain visibly deforms right in front of you, something that happens on all platforms. My second conclusion is that the relatively low resolution on PlayStation 5 makes sense, since the console is running with most of the PC-equivalent settings maxed out.

Choosing a particular stress point on PlayStation 5 - one that drops below 60fps and hits the 1440p resolution floor - I ran the PC version fixed at 1440p with settings as close to equivalent as possible. And here's where we see the Nvidia vs AMD divide in action. RTX 2060 Super is 20 per cent slower than PlayStation 5, a deficit that shrinks to 10 per cent with an RTX 2070 Super. Based on tests with an RTX 2080 Ti, it looks like an RTX 2080 Super or RTX 3060 Ti would be required to match or exceed PlayStation 5's output. However, based on my tests with a Navi-based RX 5700, I'd expect an RX 5700 XT to get within striking distance of the console's throughput. All of this assumes the very high clouds preset - performance improves if you drop to high.
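Note that "20 per cent slower than PS5" is not the same as "PS5 is 20 per cent faster". Assuming "slower" means the GPU delivers that fraction less of PS5's frame-rate, the catch-up it needs is a factor of 1 / (1 - p). The Python sketch below, using a hypothetical `uplift_needed` helper, works through the two figures from the test.

```python
def uplift_needed(percent_slower: float) -> float:
    """Percentage speed-up a GPU needs to match a baseline it trails.

    Assumes "p per cent slower" means the GPU runs at (1 - p/100) of the
    baseline's frame-rate, so it must improve by a factor of 1 / (1 - p/100).
    """
    relative = 1.0 - percent_slower / 100.0
    return (1.0 / relative - 1.0) * 100.0


# Deficits relative to PS5 at the 1440p stress point, from the text
deficits = {"RTX 2060 Super": 20.0, "RTX 2070 Super": 10.0}

for gpu, slower in deficits.items():
    print(f"{gpu}: {slower:.0f}% slower -> needs {uplift_needed(slower):.1f}% more performance")
```

On that reading, the RTX 2060 Super would need roughly 25 per cent more performance to draw level, and the RTX 2070 Super roughly 11 per cent.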

Looking at the overall gains delivered by my optimised settings, the game's scalability is disappointing. Dropping from ultra high across the board to my selected settings increased performance by only 14 per cent on an RTX 2060 Super at 1440p. The biggest gain comes from enabling the adaptive resolution setting, which lifts the advantage of optimised settings over ultra to around 28 per cent. But once again, the DRS solution falls short: the resolution range isn't flexible enough to keep you at 60fps in many scenarios, limiting its effectiveness.
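Since both figures are measured against the same ultra baseline, the contribution of adaptive resolution on its own can be separated out by dividing one by the other. The sketch below does this and, under the simplifying assumption that GPU frame time scales linearly with pixel count (real games rarely behave that neatly), also estimates the best case at the 85 per cent per-axis floor and checks whether a given full-resolution frame time could reach 60fps. All names are hypothetical, and the frame times in the loop are illustrative inputs, not measurements.

```python
# Figures from the text: RTX 2060 Super at 1440p, relative to ultra settings
OPTIMISED_GAIN = 1.14      # optimised settings vs ultra
OPTIMISED_DRS_GAIN = 1.28  # optimised settings plus adaptive resolution vs ultra
DRS_FLOOR = 0.85           # minimum per-axis scale of the PC adaptive setting
TARGET_MS = 1000.0 / 60.0  # frame-time budget for 60fps


def drs_contribution(total: float, settings_only: float) -> float:
    """Gains against a shared baseline multiply, so divide to isolate one."""
    return total / settings_only


def pixel_bound_ceiling(floor: float) -> float:
    """Best-case speed-up at the DRS floor, assuming cost tracks pixel count."""
    return 1.0 / (floor * floor)


def can_hold_60(full_res_ms: float, floor: float = DRS_FLOOR) -> bool:
    """True if dropping to the DRS floor would bring the frame under budget.

    Assumes frame time scales linearly with rendered pixels (a simplification).
    """
    return full_res_ms * floor * floor <= TARGET_MS


extra = drs_contribution(OPTIMISED_DRS_GAIN, OPTIMISED_GAIN)
print(f"Adaptive resolution adds about {(extra - 1) * 100:.1f}% on top of optimised settings")
print(f"Pixel-bound ceiling at the floor: {(pixel_bound_ceiling(DRS_FLOOR) - 1) * 100:.1f}%")
for ms in (20.0, 23.0, 25.0):  # hypothetical full-resolution frame times
    print(f"{ms:.0f} ms at full res -> can hold 60fps: {can_hold_60(ms)}")
```

On these assumptions, adaptive resolution adds roughly 12 per cent on top of the optimised settings, and even in the ideal case the 85 per cent floor can't recover more than about 38 per cent, so any scene whose full-resolution frame time exceeds roughly 23ms can't be pulled back to 60fps by DRS alone.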

All told, Assassin's Creed Valhalla may not be the best way to compare consoles and PC, especially bearing in mind the disparity in performance between AMD and Nvidia GPUs, but it's certainly an interesting data point. It also emphasises that, despite the relatively high prices, console users are getting a great deal. When PS4 and Xbox One launched back in 2013, a £100 graphics card could match the console experience, for a while at least. Seven years on, you're looking at much more expensive PC parts just to reach console parity - let alone exceed it.