Hands-on with AMD FSR 3 frame generation - taking the fight to DLSS 3

Image quality looks good, but this is far from the finished article.

Last Friday, AMD finally released FSR 3 frame generation, its answer to Nvidia's DLSS 3, with support added to two titles: Forspoken and Immortals of Aveum. We'd already seen demos of both games under controlled conditions at Gamescom back in August, but this was our first chance to go hands-on and truly put FSR 3 through its paces. The verdict? Image quality in terms of generated frames is impressive, but elsewhere there are some fundamental issues AMD needs to address.

Let's quickly review what frame generation is about. Nvidia kicked this all off with DLSS 3, and in many respects, FSR 3 follows exactly the same principles. Two consecutive frames are rendered, then an intermediate frame is generated to slot in between them, using optical flow analysis informed by inputs from the game engine, such as motion vectors. Frame-rate then typically receives an extraordinary boost: in my tests with FSR 3 in Immortals of Aveum on an RX 7900 XTX at 4K resolution, it's a 71 percent boost compared to standard rendering.
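To make the interpolation step concrete, here's a deliberately tiny sketch on one-dimensional "frames". It is not AMD's or Nvidia's algorithm: it assumes a single integer motion vector per output pixel (a hypothetical stand-in for the engine's motion vectors plus optical flow) and simply samples backwards into the earlier frame and forwards into the later one, then blends. Real frame generators also have to deal with disocclusions, UI elements and sub-pixel motion, none of which is modelled here.

```python
def interpolate_frame(frame_a, frame_b, motion, t=0.5):
    """Toy motion-compensated interpolation between two rendered 1D frames.

    Assumption: motion[x] is an integer displacement (in pixels) describing how
    content at intermediate-frame position x moves from frame_a to frame_b.
    t is the time of the generated frame between frame_a (0.0) and frame_b (1.0).
    """
    n = len(frame_a)
    out = []
    for x in range(n):
        m = motion[x]
        # Split the motion so the two offsets always add up to the full vector.
        back = round(t * m)
        fwd = m - back
        # Step back along the vector into frame A and forward into frame B,
        # clamping at the frame edges.
        xa = min(max(x - back, 0), n - 1)
        xb = min(max(x + fwd, 0), n - 1)
        # Weight each source frame by how close it is in time to the new frame.
        out.append((1 - t) * frame_a[xa] + t * frame_b[xb])
    return out


# A bright pixel moves two pixels to the right between the two rendered frames.
a = [0, 0, 1, 0, 0, 0, 0]
b = [0, 0, 0, 0, 1, 0, 0]
print(interpolate_frame(a, b, [2] * 7))  # bright pixel lands halfway, at index 3
```

The generated frame places the object at the midpoint of its motion, which is what makes the presented sequence look smoother even though no extra frames were rendered.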

I'm careful with the words I use to describe the frame-rate uplift because, as with DLSS 3, I don't think you can call it 'extra performance' as such, even though both Nvidia and AMD will likely use that term. The game itself is still running as it was without frame generation and, in fact, the extra calculations required to generate the intermediate frame have a cost of their own, so one might even argue that frame generation reduces performance.
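The argument above can be illustrated with a crude model. The assumptions are ours, not AMD's or Nvidia's: exactly one generated frame per rendered frame, and a fixed generation cost added to every rendered frame's time, with v-sync, CPU limits and queueing ignored. The input numbers in the example are hypothetical.

```python
def frame_gen_model(base_frame_ms, fg_cost_ms):
    """Return (rendered_fps, presented_fps) with frame generation enabled.

    Simplified assumption: generating the intermediate frame adds fg_cost_ms to
    each rendered frame, and one generated frame is shown per rendered frame.
    """
    rendered_fps = 1000.0 / (base_frame_ms + fg_cost_ms)
    presented_fps = 2 * rendered_fps
    return rendered_fps, presented_fps


# Hypothetical game rendering at 20ms per frame (50fps) with a 2ms generation cost.
rendered, presented = frame_gen_model(20.0, 2.0)
print(f"rendered {rendered:.1f}fps, presented {presented:.1f}fps")
```

In this example the game itself drops from 50fps to roughly 45fps of actually rendered frames, while the presented frame-rate rises to roughly 91fps: smoother output, slightly lower underlying performance.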

The output, however, is visibly much smoother. It looks like extra performance, but it may not feel like it, because buffering up that extra frame incurs latency and adds to the response time as you play. Nvidia uses its Reflex technology to claw back as much latency as possible, while AMD has its own Anti-Lag and Anti-Lag+ technologies. Ideally, response time with frame generation enabled should be the same as it is without.

While FSR 3 is very similar indeed to DLSS 3 in principle, there are some differences. Firstly, FSR 3 is cross-vendor. AMD has recommended specs, but it's basically a compute shader, so if the game runs on your PC, FSR 3 should run too. The catch is that the Anti-Lag and Anti-Lag+ latency mitigation technologies are AMD-only, though you should be able to use Nvidia Reflex if you have a GeForce card. Thanks to AMD, older Nvidia cards now have a frame-gen option, which is pretty great, really, isn't it?

The next difference is that DLSS 3 can run frame generation from any input: native resolution, DLSS, XeSS, even FSR 2. It'll generate interpolated frames from any kind of base imagery you give it. AMD's FSR 3 is not so flexible, only working with FSR 2 upscaling. Finally, DLSS 3 doesn't officially support v-sync, but it does work with VRR displays and also supports v-sync off. In our tests, FSR 3 does not work with VRR, while v-sync off is completely broken. On the latter point, AMD says that we're looking at non-final code, while its statement to us suggests that VRR does work, but I would take issue with that.

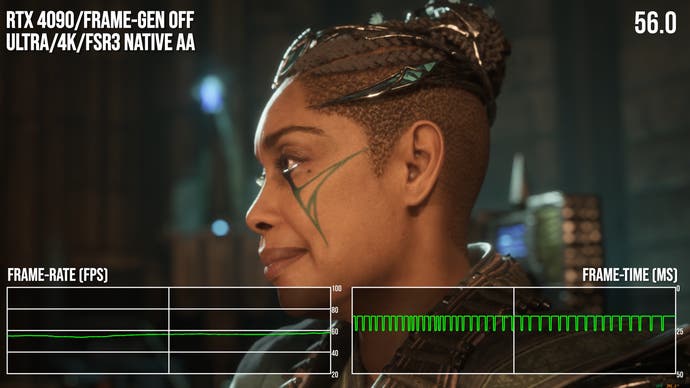

In terms of frame-rate boosts, the 71 percent improvement I saw in a benchmark scene from Immortals of Aveum is impressive, while the more capable RTX 4090 saw a similar uplift of 67 percent. That's with v-sync on, testing at 4K on ultra settings in native AA mode, where FSR 2 is used not for upscaling but simply for anti-aliasing. Looking at frame-pacing, though, there's a problem: the further off you are from your display's refresh rate, the more inconsistent frame delivery becomes. This, combined with the fact that VRR does not work, means that you need to turn on frame generation, then tune your settings to get as close to your display's refresh rate as you possibly can.
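Why does landing close to the refresh rate matter so much without VRR? The sketch below is a generic illustration of v-sync quantisation, not a model of FSR 3's internal frame pacing. It assumes frames finish at a perfectly steady rate no higher than the refresh rate, and that each one is shown at the first vblank at or after it finishes; exact fractions are used so that frames finishing exactly on a vblank are not misplaced by floating-point error.

```python
import math
from fractions import Fraction


def vsync_intervals(render_fps, refresh_hz, frames):
    """Number of refresh intervals between consecutive presented frames under v-sync.

    Simplified assumptions: steady frame delivery at render_fps <= refresh_hz,
    and each frame is displayed at the next vblank once it is ready.
    """
    if render_fps > refresh_hz:
        raise ValueError("this sketch assumes render_fps <= refresh_hz")
    frame_time = Fraction(1, render_fps)
    refresh = Fraction(1, refresh_hz)
    # Index of the vblank at which each frame (0..frames) first appears.
    vblanks = [math.ceil(k * frame_time / refresh) for k in range(frames + 1)]
    return [later - earlier for earlier, later in zip(vblanks, vblanks[1:])]


print(vsync_intervals(60, 60, 6))  # every frame held for one refresh
print(vsync_intervals(50, 60, 6))  # some frames held for two refreshes
```

At 60fps on a 60Hz display, every frame is on screen for one refresh (16.7ms). At 50fps, roughly one frame in five is held for two refreshes (33.3ms), producing an uneven cadence that reads as judder. A VRR display would instead refresh when each frame is ready, which is why losing VRR support hurts so much.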

We'd usually benchmark with v-sync off and, on paper at least, I saw similar frame-rate uplifts. However, when v-sync is disabled, FSR 3 frame-gen only seems to display generated frames for less than a millisecond: each one presents as a tiny 'bar', very reminiscent of the 'runt frames' from multi-GPU testing back in the day.

In terms of presented frames you actually see, there are fewer of them with v-sync off than with frame generation simply turned off. AMD says that this is a function of the early code used in Immortals of Aveum and Forspoken. Ultimately, though, FSR 3 is v-sync or bust, and even when it's working, frame-pacing under load is not where it should be.
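The gap between a counted frame-rate and what you actually see can be expressed as a simple filter over on-screen frame durations. This is a hypothetical sketch: it assumes you already have per-frame display times (for example from a frame-capture analysis), and the 1ms runt threshold is an illustrative assumption, not an AMD or Digital Foundry figure.

```python
def effective_fps(display_ms, runt_threshold_ms=1.0):
    """Return (raw_fps, effective_fps) for a list of on-screen frame durations.

    Frames visible for less than runt_threshold_ms are treated as runts: they
    are counted by a frame counter but add almost nothing to perceived smoothness.
    """
    total_ms = sum(display_ms)
    visible = [d for d in display_ms if d >= runt_threshold_ms]
    raw_fps = len(display_ms) * 1000 / total_ms
    effective = len(visible) * 1000 / total_ms
    return raw_fps, effective


# Hypothetical capture: two normal frames with a sub-millisecond generated frame between them.
print(effective_fps([10.0, 0.5, 9.5]))  # (150.0, 100.0)
```

A frame counter would report 150fps here, but only 100fps worth of frames are visible for any meaningful length of time.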

Comparing FSR 3 frame-gen to its DLSS 3 equivalent is challenging for two reasons. Firstly, FSR 3 requires FSR 2 to function, meaning you can't feed it native resolution frames or frames produced via other upscalers in the way that you can with DLSS 3. Secondly, it seems that Ascendant Studio, the developer of Immortals of Aveum, has prevented DLSS 3 frame generation from working with FSR 2 frames, so like-for-like testing is not possible. The quality of AMD's frame generation is actually quite good: it does look worse than DLSS 3, but only because FSR 2 upscaling doesn't match the quality of the DLSS 2 upscaling you're forced to use with DLSS 3.

I did some very quick latency testing in Immortals of Aveum, using Nvidia FrameView to get the PC Latency metrics, as AMD's own latency monitor didn't seem to work with the game. Thankfully, Aveum supports Nvidia Reflex, meaning that markers are baked into the game, allowing latency to be measured even on AMD or Intel GPUs. Those markers are active even when Reflex isn't used. Here are my results in handy table form, based on tests on an RX 7900 XTX running the game at 4K resolution and ultra settings in FSR 3 quality mode.

| PC Latency (Nvidia FrameView) | Frame-Gen Off | Frame-Gen On |
|---|---|---|
| No Anti-Lag | 55.7ms | 64.5ms |
| Anti-Lag | 50.5ms | 60.7ms |
| Anti-Lag+ | 27.5ms | 62.5ms |

With basic Anti-Lag in play, roughly 4-5ms is shaved off both the frame-gen on and frame-gen off results, so effectively we're in the same ballpark, but the reduction is quantifiable. Anti-Lag+ has a tremendous effect on Immortals of Aveum, shaving off around 28ms (!) when frame generation is disabled. However, it makes barely any difference (around 2ms) with frame generation in effect, which is a real shame. Still, a circa 10ms addition to input lag when enabling FSR 3 frame generation is not too bad and I doubt most people would be able to tell the difference. Anti-Lag+ without frame-gen, though? That's a big improvement.

UPDATE 10/10/23: After publication of this article, Nvidia got in touch, suggesting that its FrameView software needs an update to accurately measure PC Latency with FSR 3 frame-gen. Nvidia recommended measuring with LDAT, an external latency measurement tool that tracks button-to-pixel input lag. It relies on a mouse click (firing a weapon) to start each latency measurement, which is a problem for replicating the test above, where weapons are inactive.

The LDAT test below was taken after the two cutscenes for chapter three are complete, in a much heavier scene than the test above, so input lag across the board is higher as frame-rate is lower. With no Anti-Lag active, the delta between frame-gen on and off is 10.4ms, much the same as in the test above. With Anti-Lag on, the slight reduction with frame-gen off is retained in this test, but this time there's no reduction at all with frame-gen. Finally, with Anti-Lag+, there's a similar reduction in lag of around 29ms with frame-gen off but, once again, frame generation still seems to have much the same latency as disabling all latency mitigation techniques.

| Button-to-Pixel Latency (Nvidia LDAT) | Frame-Gen Off | Frame-Gen On |
|---|---|---|
| No Anti-Lag | 96.9ms | 107.3ms |
| Anti-Lag | 93.5ms | 108.4ms |
| Anti-Lag+ | 67.8ms | 106.8ms |
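For anyone wanting to check the deltas quoted above, the arithmetic can be reproduced directly from the two tables. The figures below are transcribed from this article; the dictionary and function names are hypothetical helpers for illustration only, and per the update, the FrameView numbers may be affected by the measurement issue Nvidia flagged.

```python
# Latency in ms as (frame-gen off, frame-gen on), transcribed from the tables above.
FRAMEVIEW = {
    "No Anti-Lag": (55.7, 64.5),
    "Anti-Lag": (50.5, 60.7),
    "Anti-Lag+": (27.5, 62.5),
}
LDAT = {
    "No Anti-Lag": (96.9, 107.3),
    "Anti-Lag": (93.5, 108.4),
    "Anti-Lag+": (67.8, 106.8),
}


def frame_gen_penalty(table):
    """Extra latency (ms) from enabling frame generation, per mitigation setting."""
    return {mode: round(on - off, 1) for mode, (off, on) in table.items()}


def mitigation_saving(table, mode, frame_gen=False):
    """Latency (ms) saved by a mitigation setting compared with no Anti-Lag."""
    idx = 1 if frame_gen else 0
    return round(table["No Anti-Lag"][idx] - table[mode][idx], 1)


print(frame_gen_penalty(LDAT))                           # 10.4ms penalty with no Anti-Lag
print(mitigation_saving(FRAMEVIEW, "Anti-Lag+"))        # 28.2
print(mitigation_saving(LDAT, "Anti-Lag+"))             # 29.1
print(mitigation_saving(LDAT, "Anti-Lag+", frame_gen=True))  # 0.5
```

Both measurement methods tell the same story: Anti-Lag+ saves around 28-29ms with frame generation off, but only a millisecond or two once frame generation is enabled.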

Summing up FSR 3, there's the sense that we've got two significant wins here. First of all, without any hardware-based optical flow analyser, AMD has managed to get results comparable to DLSS 3. How close? We don't know, as we can't feed both frame generators with the same images. Image quality is certainly comparable to DLSS 3. The other major win is that you are getting the frame-rate uplift you'd expect.

However, AMD has a lot of work to do and I'm not sure FSR 3 should have launched quite yet. Frame generation is great for taking a lower performing game and propelling frame-rates into high refresh rate territory. However, you need VRR to smooth off the experience and that simply doesn't work yet. Meanwhile, v-sync works, but its judder artefacts are no longer welcome in the PC gaming space at a time when virtually every monitor, and most TVs, ship with some kind of VRR functionality. Frame-pacing under heavy load is clearly problematic. The only way to get a good experience is to tweak settings to get as close as possible to your display's refresh rate, whereas DLSS 3 just quietly gets the job done as expected, letting VRR do the heavy lifting where required in demanding scenes.

DLSS 3 itself launched with problems, of course, many of which were overcome given time. Hopefully, we'll see AMD address the key concerns surrounding FSR 3 frame generation. There's promise here, but it's some way off being the finished article.