Eyes-on with PC Shadow of Mordor's 6GB ultra-HD textures

UPDATE: Video added comparing PS4 with the PC game running ultra textures.

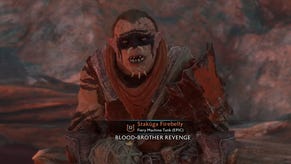

The PC version of Monolith's Shadow of Mordor features an optional, ultra-HD texture pack that requires a graphics card with a colossal 6GB of memory for best performance. It's an option that restricts the game's absolute high-end experience to a tiny minority of PC gamers - so the question is, to what extent are the graphics compromised for everyone else? And how does the console version fit in?

First of all, it's worth pointing out that as of this writing, actually gaining access to the texture pack is a convoluted procedure that we only discovered thanks to the legwork done by German site PCHardware.de. You need to access this Steam URL, hit the launch or install buttons, then when it errors out, head into your Steam client, right-click on Shadow of Mordor in your Steam library, select DLC, tick the HD texture pack and then force an update (or verify the files). If there are any problems with the last part, restarting the Steam client should sort it out. The optional texture pack is a 3.7GB download. UPDATE 2/10/14 7:39am: There's a direct link to the patch available and you can get it here.

Curiously, the option for ultra textures is still present in the game, even without the pack installed - it simply defaults to high quality art instead. Graphics cards with 6GB of RAM are few and far between - limited edition versions of cards like the Radeon HD 7970, R9 290X, GTX 780 and 780 Ti were released with the requisite RAM, but we suspect that the majority of people able to utilise the high-end art in Shadow of Mordor will be owners of Nvidia's GTX Titan series GPUs - exactly what we used for our comparison.

So without further ado, we present a selection of comparisons of the game's opening scenes, captured at medium, high and ultra texture settings with all other settings ramped up as high as they go. Monolith recommends a 6GB GPU for the highest possible quality level - and we found that at both 1080p and 2560x1440 resolutions, the game's art ate up between 5.4GB and 5.6GB of onboard GDDR5. Meanwhile, the high setting utilises 2.8GB to 3GB, while medium is designed for the majority of gaming GPUs out there, occupying around 1.8GB of video RAM.
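As a rough illustration of what those measurements mean for a given card, the sketch below picks the highest texture setting whose measured peak usage fits within a card's memory. The usage figures are the upper ends of the ranges quoted above; the function name and the simple "fits or doesn't" rule are our own assumptions for illustration, not anything the game itself does, and it ignores whatever memory the operating system and other applications are holding.

```python
from typing import Optional

# Peak video memory use per texture setting, in GB. These are the upper ends
# of the ranges measured in the article at 1080p and 2560x1440.
MEASURED_PEAK_VRAM_GB = {
    "medium": 1.8,
    "high": 3.0,
    "ultra": 5.6,
}


def highest_fitting_setting(card_vram_gb: float) -> Optional[str]:
    """Return the highest texture setting whose measured peak fits on the card.

    Hypothetical helper for illustration only: it assumes all of the card's
    memory is available to the game, which is optimistic in practice.
    Returns None if even the medium setting does not fit.
    """
    best = None
    # Walk the settings from least to most demanding, keeping the last that fits.
    for setting, peak in sorted(MEASURED_PEAK_VRAM_GB.items(), key=lambda kv: kv[1]):
        if peak <= card_vram_gb:
            best = setting
    return best


if __name__ == "__main__":
    for vram in (2.0, 3.0, 4.0, 6.0):
        print(f"{vram:.0f}GB card -> {highest_fitting_setting(vram)}")
```

Under these assumptions a 2GB card lands on medium, 3GB and 4GB cards on high, and only a 6GB card reaches ultra. Nothing stops you selecting a higher setting than the one that fits; the cost is that artwork then has to be swapped in from system memory.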

The question is, just how much of a difference is there between the various quality settings? Well, it's safe to say that ultra textures definitely make an impact - it's just that the scenarios in which you will actually notice it during gameplay are likely to be fairly limited. Shadow of Mordor is a third-person title, so the camera is set back somewhat, diminishing the difference in many cases - unless you walk right up to a wall, for example. For the most part, we found that the high setting - in itself placing a significant video RAM requirement on the user - offers the bulk of the ultra experience.

It's at the medium quality setting where the differences start to become noticeable. Ground textures in particular look significantly blurrier compared to the high and ultra modes, and incidental detail can look rather blocky. Most of the attention to the game's visuals has been dedicated to the outrageous requirements of the ultra texture mode, but perhaps the real story here is how the lion's share of gaming GPUs have 2GB of memory - and that plants them firmly in medium quality territory. We're still looking at the console builds, but an initial comparison with PS4 suggests that it sits alongside the PC's high quality setting.

So just why does the ultra texture setting exist at all when so few PC owners can actually use it without a performance penalty? That's the question that Eurogamer's video editor Ian Higton put to the game's lead designer, Bob Roberts, at EGX last week.

"To make a world as rich and detailed, especially the characters - we put so much into the enemies with the nemesis system and everything - our artists are making things at an outrageously high fidelity," he said.

"They are on monster PCs making the highest possible quality stuff and then we find ways to optimise it, to fit onto next-gen, to fit onto PCs at high-end specs. Then obviously there's going to be that boundary where our monster development PCs are running it OK - but why not give people the option to crank it up? It makes sense to get it out into the world there - we have it, we built it that way to look as good as possible. You might as well, right?"

One thing we should point out is that it is perfectly possible to run higher-quality artwork on lower-capacity graphics cards. However, you quickly fall foul of the split-memory architecture of the PC. On Xbox One and PS4, the available memory is unified in one address space, meaning instant access to everything. On PC, memory is typically split between system DDR3 and the graphics card's onboard GDDR5. Running high or ultra textures on a 2GB card sees artwork swapping between the two memory pools, creating stutter. Shadow of Mordor does include an optional 30fps cap, though - with a 2GB GTX 760, we could run the game at ultra settings with high quality textures and frame-rate was pretty much locked at the target 30fps with only very minor stutter. In short, there's a way forward for those using 2GB cards, but it does involve locking frame-rate at the console standard - and the ultra textures didn't play nicely with the card, even at 30fps.

From our perspective, there are two ways of looking at this memory-devouring optional mode. On the one hand, it's a good thing that developers are making all of their assets available to the userbase - and it's interesting to see that art is already being mastered at quality levels beyond the PS4/Xbox One console standard. On the other, the mere existence of this texture pack has led to concern that even high-end, cutting-edge GPUs like the GTX 970 and GTX 980 are already obsolete - even though, based on our initial testing, the hit to the experience isn't really an issue.

Indeed, it's actually the compromises made to accommodate 2GB graphics cards that are more concerning. The game still looks good, but in certain areas, console is a cut above - unless you kick in the frame-rate limiter. We saw a similar story with Titanfall: Respawn's debut required a 3GB graphics card to match the texture quality found in the Xbox One version of the game. That being the case, the recent discounts found on the 3GB Radeon R9 280 start to look compelling, especially as its replacement, the R9 285, only has 2GB of RAM in its standard configuration.

We've just received the console versions of the game - look out for a PS4/Xbox One/PC Face-Off later this week.