Tech Interview: Metro Exodus, ray tracing and the 4A Engine's open world upgrades

Tomorrow's technology today.

Remember the days when key technological innovations in gaming debuted on PC? The rise of multi-platform development and the arrival of PC technology in the current generation of consoles has witnessed a profound shift. Now, more than ever, PlayStation and Xbox technology defines the baseline of a visual experience, with upgrade vectors on PC somewhat limited - often coming down to resolution and frame-rate upgrades. However, the arrival of real-time ray tracing PC technology is a game-changer, and 4A Games' Metro Exodus delivers one of the most exciting, forward-looking games we've seen for a long, long time. It's a title that's excellent on consoles, but presents a genuinely game-changing visual experience on the latest PC hardware.

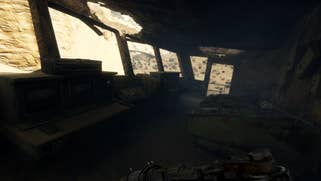

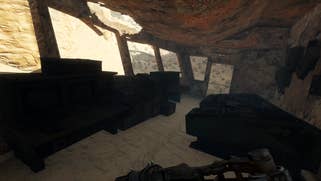

The game is fascinating on many levels. First of all, as we approach the tail-end of this console generation, it's actually the first title built from the ground up for current-gen hardware from 4A Games - genuine pioneers in graphics technology. It also sees 4A transition from a traditional linear-style route through its games to a more open world style of gameplay, though the narrative element is much more defined, and missions can be approached in a much more Crysis-like way. Think of it more as a kind of 'wide' level design, as opposed to an Ubisoft-style, icon-filled sandbox. Regardless, this transition requires a massive rethink in the way that the world of Metro is rendered and lit, while at the same time maintaining the extreme detail seen in previous Metro titles. And remember, all of this has to work not just on the latest and greatest PCs and enhanced consoles, but on base Xbox and PlayStation hardware too.

And then there's the more forward-looking, next generation features within the game. Real-time ray tracing is now possible on PCs equipped with Nvidia RTX graphics cards, and while what we saw at Gamescom was highly impressive, we were looking at 4A Games' very earliest implementation of ray tracing, with frame-rates at 1080p dipping beneath 60 frames per second on the top-end RTX 2080 Ti. And this raises an obvious question - how would lesser cards cope? The answer comes down to 4A revising its RT implementation, revamping the technology to deliver equivalent results to its stunning ray traced global illumination solution, but doing so in such a way that allows for all of the RTX family of GPUs to deliver good results.

All of which is to say that as we waited for Metro Exodus review code to arrive, Digital Foundry had a lot of questions about the directions 4A has taken with its latest project, how its engine has been enhanced and upgraded since we last saw it in the Metro Redux titles and of course, how it has delivered and optimised one of the most beautiful real-time ray tracing implementations we've seen. Answering our questions in depth are 4A rendering programmer Ben Archard and the developer's CTO, Oles Shishkovstov.

What are some of the larger changes in terms of features in the 4A Engine between the Metro Redux releases and Metro Exodus? Just looking at Metro Exodus it seems like a lot of modern features we are seeing this generation are there in a very refined form, and effects which the 4A engine previously pioneered - physically-based materials, global volumetrics, object motion blur on consoles, extensive use of parallax mapping/tessellation, lots of GPU particles, etc.

Ben Archard: A load of new features and a conceptual shift in the way we approach them. Stochastic algorithms and denoising are now a large focus for rendering. We'll start with the stochastic algorithms because they get used in a lot of different features and it is kind of an umbrella term for a few techniques.

Let's say you have some large and complicated system that you are trying to model and analyse, one that has a huge number of individual elements (way too much information for you to reasonably keep track of). You can either count up literally every point of data and draw your statistical conclusions the brute force way, or you can randomly select a few pieces of information that are representative of the whole. Think of doing a random survey of people in the street, or a randomised medical test of a few thousand patients. You use a much smaller set of values, and although it won't give you the exact data you would get from checking everyone in those situations, you still get a very close approximation when you analyse your results. The trick, in those examples, is to make sure that you pick samples that are well distributed so that each one is genuinely representative of a wide range of people. You get basically the same result but for a lot less effort spent in gathering data. That's the Monte Carlo method in a nutshell.
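The survey analogy above can be sketched in a few lines. This is only an illustration: the population of random values, the sample size of 2,000 and the function name `estimate_mean` are all assumptions made for the example, not anything from the 4A Engine.

```python
import random

def estimate_mean(population, sample_count, rng):
    """Estimate the population mean from a small random subset."""
    samples = rng.sample(population, sample_count)
    return sum(samples) / sample_count

rng = random.Random(1)
# Hypothetical "large system": 100,000 values we would rather not visit one by one.
population = [rng.uniform(0.0, 1.0) for _ in range(100_000)]

exact = sum(population) / len(population)      # brute force: touch every element
approx = estimate_mean(population, 2_000, rng) # Monte Carlo: touch 2% of them

print(f"exact={exact:.4f} approx={approx:.4f}")
```

The estimate lands close to the brute-force answer while reading only a fraction of the data, which is the trade described above.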

Tied to that, the other main part of stochastic analysis is some randomisation. Of course, we don't do anything truly randomly, and nor would we want to. A better way of putting it is the generation of sample noise or jittering. The reason noise is important is because it breaks up regular patterns in whatever it is that you are sampling, which your eyes are really good at spotting in images. Worst case, if you are sampling something that changes with a frequency similar to the frequency you are sampling at (which is low because of the Monte Carlo) then you can end up picking results that are undesirably homogeneous, and you can miss details in between. You might only pick bright spots of light on a surface for example, or only the actual metal parts in a chain link fence. So, the noise breaks up the aliasing artifacts.
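The chain link fence case can be reproduced with a one-dimensional toy signal. In this sketch the fence is assumed to be "wire" for 2 units out of every 8, and the sampling stride is assumed to match the fence period exactly, which is the worst case mentioned above; the function names are illustrative.

```python
import random

def fence(x):
    """1.0 on the metal wire, 0.0 in the gaps; wire covers 2 of every 8 units."""
    return 1.0 if (x % 8.0) < 2.0 else 0.0

def regular_estimate(n, stride=8.0):
    """Sample at a fixed stride that matches the fence period."""
    return sum(fence(i * stride) for i in range(n)) / n

def jittered_estimate(n, rng, stride=8.0):
    """Same sample count, but each sample is offset randomly within its stride."""
    return sum(fence(i * stride + rng.uniform(0.0, stride)) for i in range(n)) / n

rng = random.Random(3)
regular = regular_estimate(4_000)
jittered = jittered_estimate(4_000, rng)
print(f"true coverage=0.25 regular={regular:.2f} jittered={jittered:.2f}")
```

The regular pattern only ever lands on wire and reports a solid wall of metal, while the jittered pattern converges towards the real 25 per cent coverage at the cost of per-sample noise.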

The problem is that when you try to bring your number of samples right down, sometimes to one or less per pixel, you can really see the noise. So that is why we have a denoising TAA. Any individual frame will look very noisy, but when you accumulate information over a few frames and denoise as you go then you can build up the coverage you require. I'll reference your recent RE2 demo analysis video, where you capture a frame immediately after a cutscene and there is only one frame of noisy data to work with. You will also see it in a lot of games where you move out from a corner and suddenly a lot of new scene information is revealed, and you have to start building from scratch. The point I am trying to make here is why we (and everyone else) have generally opted for doing things this way and what the trade-off is. You end up with a noisier image that you need to do a lot of work to filter, but the benefits are an image with less aliasing and the ability to calculate more complex algorithms less often.
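As a rough illustration of accumulating over frames, the sketch below blends each new noisy sample into a running history with an exponential moving average. The blend factor of 0.05, the uniform noise model and the function names are assumptions for the example; a real denoising TAA also reprojects and rejects stale history, which is not shown here.

```python
import random

def accumulate(history, sample, blend=0.05):
    """Blend the new noisy sample into the running history (exponential moving average)."""
    return history + (sample - history) * blend

def simulate(frames, seed=7, true_value=0.5):
    """Return the first raw frame and the accumulated value after `frames` frames."""
    rng = random.Random(seed)
    history = None
    first = None
    for _ in range(frames):
        noisy = true_value + rng.uniform(-0.5, 0.5)  # one noisy sample per frame
        if history is None:
            # First frame after a cut: nothing to blend with, so the noise shows.
            history = noisy
            first = noisy
        else:
            history = accumulate(history, noisy)
    return first, history

first, settled = simulate(400)
print(f"first frame={first:.3f} after 400 frames={settled:.3f} (true value 0.5)")
```

The first frame is whatever the single sample happened to be, which matches the post-cutscene case described above; later frames settle around the true value.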

So that is sort of the story of a lot of these modern features. They are really complicated to calculate, and they have a lot of input data, so we try to minimise the number of times we actually calculate them and then filter afterwards. Now, of course, computer graphics is replete with examples of situations where you have a huge amount of data that you want to estimate very closely, but with as few actual calculations as possible. Ray tracing is an obvious example because there are way more photons of light than the actual number of rays we cast.

Other places we use it are for hair where there are more fine strands than you would like to spend geometry on, all of which are too small for individual pixels. It's used in a lot of image sampling techniques, like shadow filtering, to generate the penumbra across multiple frames. Also, in screen-space reflections, which is effectively a kind of 2D ray tracing. We use depth jitter in volumetric lighting: with our atmospheric simulation we integrate across regular depth values to generate a volume texture. Each voxel as you go deeper into the texture builds up on those before, so you get an effective density of fog for a given distance. But of course, only having a volume texture that is 64 voxels deep to cover a large distance is pretty low fidelity so you can end up with the appearance of depth planes. Adding in some depth jitter helps break this up.
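The depth integration for fog can be pictured as a running sum along one column of the volume texture. In this minimal sketch the 64 slices come from the text above, while the `density` callback, the linear slice spacing and the way `jitter` shifts each sample within its slice are assumptions for illustration.

```python
def integrate_fog(density, max_depth, slices=64, jitter=0.0):
    """Accumulate fog front to back; entry i holds the fog built up to slice i.

    `jitter` in [0, 1) shifts where inside each slice the density is sampled.
    """
    step = max_depth / slices
    accumulated = 0.0
    column = []
    for i in range(slices):
        depth = (i + jitter) * step
        accumulated += density(depth) * step  # each voxel builds on those before it
        column.append(accumulated)
    return column

# Hypothetical fog that thickens linearly with distance.
thickening = lambda depth: depth

unjittered = integrate_fog(thickening, 64.0)[-1]
jittered = integrate_fog(thickening, 64.0, jitter=0.5)[-1]
print(unjittered, jittered)
```

With only 64 fixed sample depths the result depends on where the samples sit; varying `jitter` per pixel or per frame spreads that error out as noise instead of visible planes.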

Regular, traditional screen-space ambient occlusion is another technique that works by gathering a lot of samples from the surrounding depth buffer to estimate how much light is blocked from a given pixel. The number of pixels you have to sample to get good data increases with the square of the distance out to which you want the pixel to be affected. So, cutting down on the number of samples here is very important, and again noisy AO can be filtered from frame to frame. Incidentally that is one of (and not the only one of) the reasons why AO is going to have to go the ray tracing route in the future. The sheer range at which objects can directly affect occlusion gets so high with RT that it eventually just becomes infeasible to accurately sample enough pixels out to that radius. And that's before we get into the amount of information that is lost during depth buffer rasterization or from being off screen.

So yes, a major focus of the renderer has been shifted over to being more selective with when we perform really major complex calculations and then devoting a large amount of frame time to filtering, denoising and de-aliasing the final image. And this comes with the benefit of allowing those calculations (which we do less frequently) to be much more sophisticated.

This is a link to an ancient (1986) paper by Robert Cook. It's in reasonably plain English and it's a really good read. It shows where a lot of this thinking comes from. This was state of the art research for offline rendering 30 years ago. As you read it, you'll be struck by exactly how much of it parallels what we are currently working towards in real-time. A lot of it is still very relevant and as the author said at the time, the field of denoising was an active area of research. It still is and it is where most of the work on RTX has been. Cook was working to the assumption of 16rpp (rays per pixel), which we can't afford yet but hopefully will be able to if the tech gets its own Moore's Law. That said I doubt they had any 4K TVs to support. Even so it's the improvements in denoising that are letting us do this with less than 1rpp.

Another big improvement is that we have really upgraded the lighting model. Both in terms of the actual calculation of the light coming from each light source, and in terms of how we store and integrate those samples into the image. We have upgraded to a full custom GGX solution for every light source, a lot of which are attenuated by stochastically filtered shadow maps, for more and nicer shadows than in the previous games. We also use a light clustering system, which stores lights in a screen-aligned voxel grid (dimensions 24x16x24). In each grid cell we store a reference to the lights that will affect anything in that cell. Then when we process the image in the compute shader, we can take each output pixel's view space position, figure out which cluster it is in, and then apply only the lights that affect that region of the screen.
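The cluster lookup described above might look roughly like this. The 24x16x24 dimensions come from the interview; everything else is assumed for the sketch, including the linear depth slicing between `near` and `far`, the dictionary storage and the function names. The engine's actual layout is not described in that detail.

```python
from collections import defaultdict

GRID = (24, 16, 24)  # cluster grid dimensions quoted in the interview

def cluster_index(px, py, depth, width, height, near, far):
    """Map a pixel and its view-space depth to a cluster cell (x, y, z)."""
    cx = min(int(px / width * GRID[0]), GRID[0] - 1)
    cy = min(int(py / height * GRID[1]), GRID[1] - 1)
    t = (depth - near) / (far - near)  # assumption: linear depth slices
    cz = min(max(int(t * GRID[2]), 0), GRID[2] - 1)
    return (cx, cy, cz)

def build_clusters(lights):
    """lights: iterable of (light_id, cells the light's volume overlaps)."""
    clusters = defaultdict(list)
    for light_id, cells in lights:
        for cell in cells:
            clusters[cell].append(light_id)
    return clusters

def lights_for_pixel(clusters, px, py, depth, width, height, near, far):
    """Return only the lights referenced by the pixel's cluster."""
    cell = cluster_index(px, py, depth, width, height, near, far)
    return clusters.get(cell, [])

# Hypothetical scene with two lights.
clusters = build_clusters([
    ("lamp", [(0, 0, 0)]),
    ("fire", [(0, 0, 0), (23, 15, 23)]),
])
print(lights_for_pixel(clusters, 0, 0, 0.1, 1920, 1080, 0.1, 100.0))
```

Because the lookup needs only a position, the same table can serve deferred and forward-rendered pixels alike.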

Now, we have always had a deferred pipeline for opaque objects, which creates a g-buffer onto which lights are accumulated afterwards. But we also had a forward section for blended effects that didn't have access to all the lighting data. Having all of the lights stored like this allows us to now have the forward renderer fully support all lights so particles and hair and water and the like can all be lit as though they were rendered in full deferred. These clusters also pack in all of the information about every type of light, including shadowed/unshadowed, spot, omni-directional, and the new light-probes. We just do dynamic branching in the shader based on which light flags are stored in the cluster buffer.

We have a high precision (FP16) render option for forward objects now too. And another option to have forward rendered effects alter the screen-space velocities buffer for more accurate motion blur on alpha blended objects. Also, our forward pass is now done at half-resolution but at 4x MSAA (where supported). This gives you the same number of samples, so you lose less information when you upscale, but rasterisation and interpolation are shared across the four samples of each pixel.

The last releases of Metro on console targeted, and impressively kept, a very stable 60fps. Metro Exodus is targeting 30fps on consoles this time around. Beyond rendering features localised to the GPU, where are additional CPU cycles from that 30fps target being spent on console?

Ben Archard: The open world maps are completely different to the enclosed tunnel maps of the other games. Environments are larger and have way more objects in them, visible out to a much greater distance. It is therefore a lot harder to cull objects from both update and render. Objects much further away still need to update and animate. In the tunnels you could mostly cull an object in the next room so that only its AI was active, and then start updating animations and effects when it became visible, but the open world makes that a lot trickier.

Lights in the distance need to run a shadow pass. Higher quality scenes with dynamic weather systems mean a greater abundance of particle effects. Procedural foliage needs to be generated on the fly as you move around. Terrain needs to be dynamically LODded. Even where distant objects can get collapsed into imposters, there are so many more distant objects to worry about.

So, a good chunk of that extra time is spent with updating more AIs and more particles and more physics objects, but also a good chunk of time is spent feeding the GPU the extra stuff it is going to render. We do parallelise it where we can. The engine is built around a multithreaded task system. Entities such as AIs or vehicles update in their own tasks. Each shadowed light, for example, performs its own frustum-clipped gather for the objects it needs to render in a separate task. This gather is very much akin to the gathering process for the main camera, only repeated many times throughout the scene for each light. All of that needs to be completed before the respective deferred and shadow map passes can begin (at the start of the frame).

So, I guess a lot of the extra work goes into properly updating the things that are there in an open world that you can't just hide behind a corner out of sight. And a lot goes into the fact that there are just more things able to be in sight.

With the release of DXR GI on PC we have to recall our discussions a few years back about real-time global illumination (rough voxelisation of the game scene was mentioned back then as a possible real-time solution for GI). What type of GI does Metro Exodus use on consoles currently? Does DXR GI have an influence on where 4A engine might go for next generation consoles?

Ben Archard: We use a spherical harmonics grid around the camera which is smoothly updated from the latest RSM data each frame. Plus a bunch of light-probes. It is a relatively cheap solution and quite good in many cases, but it can leak lighting, and is too coarse to get something that even remotely looks like indirect shadows. If next-gen consoles were good at tracing the rays we would be completely "in".

Yes. Consoles and PC use that GI method as standard for now. The method is heavily influenced by radiance hints (G. Papaioannou). The general process involves taking a 32x16x32 voxel grid (or three of them for RGB) around the camera, and for each voxel storing a spherical harmonic which encodes some colour and directional properties. We populate the grid with data from a collection of light probes and the reflective shadow map (RSM) that is generated alongside the sun's second shadow cascade. Effectively we render the scene from the sun's perspective as with a normal shadow map, but this time we also keep the albedos (light reflected) and normals (to calculate direction of reflection). This is pretty much the same thing we do during g-buffer generation.

At GI construction time, we can take a number of samples from these RSMs for each voxel to get some idea of what light reaches that voxel and from which directions. We average these samples to give us a kind of average light colour with a dominant direction as it passes through the voxel. Sampling within the voxel then gives us (broadly speaking) a sort of small directional light source. We maintain history data (the voxel grids from previous frames) for four frames in order to accumulate data smoothly over time. And, yes, we also have some jitter in the way we sample the voxel grid later when it is being used for light accumulation.
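A much-simplified picture of that per-voxel step is sketched below. It does not use spherical harmonics at all; as a stand-in, it stores a plain average colour and a normalised dominant direction. The four-frame history length comes from the text, while the equal-weight blend across history and all names here are assumptions for illustration.

```python
import math
from collections import deque

def average_rsm_samples(samples):
    """samples: list of (colour (r, g, b), direction (x, y, z)) taken from the RSM.

    Returns the average colour and a normalised dominant direction.
    """
    n = len(samples)
    colour = tuple(sum(s[0][i] for s in samples) / n for i in range(3))
    summed = [sum(s[1][i] for s in samples) for i in range(3)]
    length = math.sqrt(sum(c * c for c in summed)) or 1.0  # avoid dividing by zero
    direction = tuple(c / length for c in summed)
    return colour, direction

class VoxelHistory:
    """Keeps the last few frames of one voxel's colour and blends them."""

    def __init__(self, frames=4):
        self.colours = deque(maxlen=frames)  # oldest frame drops out automatically

    def push(self, colour):
        self.colours.append(colour)

    def smoothed(self):
        n = len(self.colours)
        return tuple(sum(c[i] for c in self.colours) / n for i in range(3))

colour, direction = average_rsm_samples([
    ((1.0, 0.0, 0.0), (1.0, 0.0, 0.0)),
    ((0.0, 1.0, 0.0), (0.0, 1.0, 0.0)),
])
print(colour, direction)
```

Sampling such a voxel then behaves, broadly, like a small directional light whose colour changes smoothly as old frames drop out of the history.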

It is a relatively cheap and effective solution, but the first thing to note is that a 32x16 texture across the screen is not a great deal of information so the technique is very low fidelity. If you imagine the amount of information you could store in a shadow map of that size (or really even smaller) it is clear that it is too coarse to approximate something that even remotely looks like indirect shadows. It also can have some light leaking issues. Of course, it has already become the outdated stop-gap because really, we want to do this with RT now and if next-gen consoles can support RT then we would be completely "in".

Let's talk about ray tracing on next-gen console hardware. How viable do you see it to be and what would alternatives be if not like RTX cards we see on PC? Could we see a future where consoles use something like a voxel GI solution while PC maintains its DXR path?

Ben Archard: It doesn't really matter - be it dedicated hardware or just enough compute power to do it in shader units, I believe it would be viable. For the current generation - yes, multiple solutions is the way to go.

This is also a question of how long you support a parallel pipeline for legacy PC hardware. A GeForce GTX 1080 isn't an out of date card as far as someone who bought one last year is concerned. So, these cards take a few years to phase out and for RT to become fully mainstream to the point where you can just assume it. And obviously on current generation consoles we need to have the voxel GI solution in the engine alongside the new RT solution. RT is the future of gaming, so the main focus is now on RT either way.

In terms of the viability of RT on next generation consoles, the hardware doesn't have to be specifically RTX cores. Those cores aren't the only thing that matters when it comes to ray tracing. They are fixed function hardware that speed up the calculations specifically relating to the BVH intersection tests. Those calculations can be done in standard compute if the compute cores are numerous and fast enough (which we believe they will be on the next gen consoles). In fact, any GPU that is running DX12 will be able to "run" DXR since DXR is just an extension of DX12.

Other things that really affect how quickly you can do ray tracing are a really fast BVH generation algorithm, which will be handled by the core APIs; and really fast memory. The nasty thing that ray tracing does, as opposed to something like say SSAO, is randomly access memory. SSAO will grab a load of texel data from a local area in texture space and because of the way those textures are stored there is a reasonably good chance that those texels will be quite close (or adjacent) in memory. Also, the SSAO for the next pixel over will work with pretty much the same set of samples. So, you have to load far less from memory because you can cache an awful lot of data.

Working on data that is in cache speeds things up a ridiculous amount. Unfortunately, rays don't really have this same level of coherence. They can randomly access just about any part of the set of geometry, and the ray for the next pixel could be grabbing data from an equally random location. So as much as specialised hardware to speed up the calculations of the ray intersections is important, fast compute cores and memory which lets you get at your bounding volume data quickly is also a viable path to doing real-time RT.

When we last spoke, we talked about DirectX 12 in its early days for Xbox One and PC, even Mantle which has now been succeeded by Vulkan. Now the PC version of Metro Exodus supports DX12. How do low-level APIs figure into the 4A engine these days? How are the benefits from them turning out for the 4A engine, especially on PC?

Ben Archard: Actually, we've got a great perf boost on Xbox-family consoles on both GPU and CPU thanks to DX12.X API. I believe it is common/public knowledge, but GPU microcode on Xbox directly consumes the API as is, like SetPSO is just a few DWORDs in the command buffer. As for PC - you know, all the new stuff and features accessible go into DX12, and DX11 is kind of forgotten. As we are frequently on the bleeding edge - we have no choice!

Since our last interview, both Microsoft and Sony have released their enthusiast consoles that pack better GPUs and upclocks on those original CPUs among other performance tweaks (Xbox One X and PS4 Pro). What are the differences in resolution and graphical settings from the respective base consoles for Metro Exodus and is the 4A engine leveraging some of the updated feature sets from those newer GPUs (rapid-packed math for example on PS4 Pro)?

Ben Archard: We utilise everything we can find in the API for the GPU at hand. As for FP16 math - it is used only in one compute shader I believe, and mostly for VGPR savings. We have native 4K on Xbox One X, and PS4 Pro upscales like other titles.

We've got different quality settings for ray tracing in the final game - what do the DXR settings actually do?

Oles Shishkovstov: Ray tracing has two quality settings: high and ultra. Ultra setting traces up to one ray per pixel, with all the denoising and accumulation running in full. The high setting traces up to 0.5 rays per pixel, essentially in a checkerboard pattern, and one of the denoising passes runs as checkerboard. We recommend high for the best balance between image quality and performance, but please note that we are still experimenting a lot, so this information is valid only at the time of writing.
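The difference between the two settings can be expressed as a per-pixel mask. The 1.0 and 0.5 rays-per-pixel budgets and the checkerboard layout come from the answer above; flipping the checkerboard on alternate frames is an assumption made for this sketch, as are the function names.

```python
def traces_ray(x, y, frame, quality):
    """Decide whether pixel (x, y) gets a ray this frame."""
    if quality == "ultra":
        return True  # up to one ray per pixel
    # "high": checkerboard; assumption: the pattern flips every frame
    return (x + y + frame) % 2 == 0

def rays_per_pixel(width, height, frame, quality):
    """Average ray budget over a width x height region."""
    traced = sum(
        traces_ray(x, y, frame, quality)
        for y in range(height)
        for x in range(width)
    )
    return traced / (width * height)

print(rays_per_pixel(8, 8, 0, "high"), rays_per_pixel(8, 8, 0, "ultra"))
```

With an alternating pattern every pixel would still receive a fresh ray every second frame, which suits the temporal accumulation described elsewhere in the interview.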

At Gamescom it was mentioned that the ray tracing for global illumination is done at three rays per pixel, so there have been some big changes then?

Oles Shishkovstov: What we showed at Gamescom was in the infancy of real-time ray tracing. We were in a learning process with a brand-new technology innovation. Ray traced GI happens to be a hard problem - that is why it is usually called "the holy grail"!

The reason why it is a hard problem is that a key part of any global illumination algorithm is the need to cosine-integrate values across the visible hemisphere. We are trying to generate a value for all of the light hitting a point, from all of the possible directions that could hit it (so any direction in a hemisphere surrounding that point). Think of it this way: what we are basically doing, conceptually, is like rendering a cubemap at each pixel and then cosine-integrating it (adding up all of the values of all of the pixels in that cubemap with some weighting for direction and angle of incidence). What was inside that imaginary "cubemap", we only know after the rendering is complete. That would be the ideal, brute-force way of doing it. In point of fact, reflection maps work in a similar way except that we pre-generate the cubemap offline, share it between millions of pixels and the integration part is done when we generate the LODs. We want a similar effect to what they were designed to achieve but at a much more precise, per-pixel level.
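To make "cosine-integrating across the hemisphere" concrete, here is a small Monte Carlo sketch. It uses the standard cosine-weighted sampling trick, where directions are drawn in proportion to the cosine term so the estimate reduces to pi times the average radiance. The toy environment (a bright cap of sky within 60 degrees of the surface normal) and all function names are assumptions for the example, not the game's code.

```python
import math
import random

def cosine_sample(rng):
    """Random direction on the hemisphere around +Z, with density cos(theta) / pi."""
    u1, u2 = rng.random(), rng.random()
    r = math.sqrt(u1)
    phi = 2.0 * math.pi * u2
    return (r * math.cos(phi), r * math.sin(phi), math.sqrt(1.0 - u1))

def incoming_radiance(direction):
    """Hypothetical environment: light arrives only within 60 degrees of the normal."""
    return 1.0 if direction[2] > 0.5 else 0.0

def estimate_irradiance(radiance, sample_count, rng):
    """Cosine-weighted estimate of the hemisphere integral of radiance * cos(theta)."""
    total = sum(radiance(cosine_sample(rng)) for _ in range(sample_count))
    return math.pi * total / sample_count

rng = random.Random(11)
estimate = estimate_irradiance(incoming_radiance, 20_000, rng)
print(f"estimate={estimate:.3f} analytic={0.75 * math.pi:.3f}")
```

This run uses 20,000 samples for a single point; the interview's budget is at most one per pixel per frame, which is why the rest of the answer is about denoising.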

Unfortunately, even a low-res cube map would have thousands of samples for us to add up, but we have one ray (one sample) per pixel to work with. To continue the analogy, imagine adding up the values of a cubemap with mostly black pixels (where we had no information) and one bright pixel. That way breaks down at that point, so we need to come up with other solutions. The saving grace of GI is that you are more interested in low frequency data than high (as you would be for reflections). This is where the stochastic approach saves us. We store our ray value and treat that one sample as being representative of many samples. We weight its importance based on how representative we think it is later. We then have a denoising pass (two actually) on this raw ray data, where we use the importance data, the history data, and the surrounding pixel data to fill in the blanks. That is just to get the ray data ready for light accumulation. We also do a final (third) denoising at the end of the frame along with TAA to clean up the final image.

So, for Gamescom we had three rays. After Gamescom, we rebuilt everything with the focus on high quality denoising and temporal accumulation of ray data across multiple frames. We have a specifically crafted "denoising" TAA at the end of the pipeline, because stochastic techniques will be noisy by nature.

What stand-out optimisations for ray tracing have been implemented - Battlefield 5's ray traced reflections uses a number of tricks like combined raymarching and ray tracing, as well as a variable ray tracing system to limit and maximise rays for where objects are most reflective while maintaining an upper bound of rays shot. Are similar optimisations in for ray traced GI in Metro Exodus? Or is the leveraging of screen-space information or limiting of rays shot based upon a metric not as feasible for something as total, and omnipresent as global illumination?

Oles Shishkovstov: Real-time ray tracing is an exciting new frontier. We are pioneering ray traced GI in games, so we are obviously learning as we go and finding better ways to implement the technology. As you say, it is not reflections, it is GI, and in our case "rough" pixels are as important (if not more so) than "smooth" ones. So, we can't really limit the number of rays or make that number "adaptive" since we always need a bare minimum to have something to work with for every pixel. With one sample you can assign an importance value and start making estimates as to how much light is there. If you don't sample anything though, you have no chance. We could be (and are) adaptive at the denoiser level though.

As for screen-space - sure, we do a cheap "pre-trace" running async with BLAS/TLAS (BVHs) update and if the intersection could be found from current depth-buffer - we use it without spawning the actual ray. We also raymarch our terrain (which is essentially heightmap), inside the ray-generation shaders, it happens to be almost free that way due to the nature of how latency hiding works on GPUs.
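Raymarching a heightmap means stepping along the ray and comparing its height with the terrain below it, with no triangle intersection involved. The sketch below uses a fixed step size and a `height_at` callback, both of which are assumptions for illustration; the interview does not describe the stepping scheme used in the ray-generation shaders.

```python
def raymarch_heightmap(height_at, origin, direction, max_distance, step=0.5):
    """March along the ray; return the distance of the first sample at or below
    the terrain surface, or None if nothing is hit within max_distance.

    origin and direction are (x, y, z) tuples with y pointing up.
    """
    t = 0.0
    while t <= max_distance:
        x = origin[0] + direction[0] * t
        y = origin[1] + direction[1] * t
        z = origin[2] + direction[2] * t
        if y <= height_at(x, z):
            return t
        t += step
    return None

# Hypothetical terrain: a flat plain at height 0.
flat = lambda x, z: 0.0

hit = raymarch_heightmap(flat, (0.0, 10.0, 0.0), (0.0, -1.0, 0.0), 100.0)
miss = raymarch_heightmap(flat, (0.0, 10.0, 0.0), (0.0, 1.0, 0.0), 100.0)
print(hit, miss)
```

A fixed step can skip thin features, so a production version would likely refine the hit or adapt the step size.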

Another problem for us - our rays are non-coherent by definition of problem. That doesn't help performance. We somewhat mitigate that by tiling a really small pre-computed blue-noise texture across the screen (changed each frame), which is used as cosine-weighted distribution random seed, so even if rays are non-coherent for nearby pixels, as they should be, they are somewhat coherent across the bigger window. That thing speeds up ray tracing itself by about 10 per cent. Not a big deal, but still something.
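The tiling idea can be shown with a small lookup. Note the stand-in: generating true blue noise is beyond a short example, so this sketch fills the tile with ordinary random values, and the 8x8 tile size and the per-frame reseeding scheme are assumptions as well. What it does show is the property described above, where neighbouring pixels get different seeds while pixels one tile apart share the same one.

```python
import random

TILE = 8  # assumed tile size; the interview only says "really small"

def make_tile(frame):
    """Build this frame's seed tile (plain random values standing in for blue noise)."""
    rng = random.Random(frame)  # a new pattern each frame
    return [[rng.random() for _ in range(TILE)] for _ in range(TILE)]

def seed_for_pixel(tile, x, y):
    """Look up the seed by wrapping pixel coordinates into the tile."""
    return tile[y % TILE][x % TILE]

tile = make_tile(frame=0)
near_a = seed_for_pixel(tile, 3, 5)
near_b = seed_for_pixel(tile, 4, 5)                   # adjacent pixel: different seed
far = seed_for_pixel(tile, 3 + TILE, 5 + 2 * TILE)    # whole tiles away: same seed
print(near_a != near_b, near_a == far)
```

Pixels that share a seed pick the same hemisphere direction, so their rays are more likely to travel through similar parts of the scene.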

Reading through Remedy's 4C presentation on its ray tracing in Northlight, and with the context of Battlefield 5 sending at most 40 per cent of screen resolution of rays in a 1:1 ratio for its RT reflections, it would appear that the higher costs of ray tracing on the GPU are not in the ray/triangle intersection portion of it handled mainly in the RT core, but rather in the associated shading. How does this performance balance (ray gen + intersection, shade, denoise, etc) look in Metro Exodus and which part of RT is heaviest in performance on the GPU?

Oles Shishkovstov: Our ray tracing shaders (apart from terrain raymarching) are only searching for the nearest hit and then store it in a UAV, there is no shading inside. This way we actually do a "deferred shading" of rays, or more specifically hit positions. It happens to be the right balance of shading/RT work for current hardware. The "deferred shading" is cheap and is not worth mentioning. What is indeed costly is denoising. The fewer rays we send per pixel, the more costly denoising becomes, as it scales essentially quadratically. A lot of work, ideas and tricks were implemented to make it real-time. It was a multi-people and even multi-company effort with Nvidia's cooperation.

At its core - it is a two-pass stochastic denoiser with recurrent accumulation. It is highly adaptive to variance, visibility, hit distances etc. Again, it doesn't produce a "clean" image by itself in all and every case, but its output noise level is enough to be "eaten" at the end of pipe's denoising TAA. As for perf split: ray tracing itself and denoising are about the same performance cost in most scenes. What other people rarely talk about - there is another performance critical thing. It is BVH (BLAS) updates which are necessary for vertex-animated stuff, plus BVH (TLAS) rebuilds necessary to keep the instance tree compact and tight. We throttle it as much as we can. Without all that its cost would be about on par with 0.5 RPP trace if not more.

What were challenges in optimising RT and what are future optimisation strategies you would like to investigate?

Oles Shishkovstov: Not that ray tracing related, it is more like common PC issue: profiling tools are the biggest problem. To optimise something, we should find the bottleneck first. Thank god (and HW-vendors) tools are slowly improving. In general, real-time ray tracing is new and we need a lot more of industry wide research. We will share our knowledge and findings at GDC 2019 and I believe others will share theirs - the graphics research community loves sharing!

A general follow-up question: are there any particular parts of the RT implementation you are proud of/or that excite you? We would love to hear.

Oles Shishkovstov: Ray traced lighting turned out very nice in the game. It feels very immersive for players. Also, the way we store, accumulate and filter irradiance, the space in which we do that - it is directional. Not only does that give us a sharp response to normal map details, it improves contact detail and indirect shadows. Best of all - it allows us to reconstruct a fairly great approximation of indirect specular.

4A Games, many thanks for your time.