Games are squandering their potential to truly immerse us

To create truly believable worlds, they need to let go of what's safe.

More than any other medium, video games have the power to truly immerse us in another world. Games can give us something very few entertainment forms can - a sense of agency. Middle-earth (or Gotham, or Tatooine) may be compelling on the big screen or on the page, but a world can only be real if you can change it - if you can poke it and prod it, or if you can make choices and experience the consequences.

Immersion comes in different flavours, and different shapes - the abstract spaces of Jeff Minter's Space Giraffe and TxK have just as much power as the chilly expanses of Skyrim - and, for me, it's one of the greatest powers games have. It's something they do better than almost anything else - and that's why it's such a shame to see so many games squander this potential so cheaply.

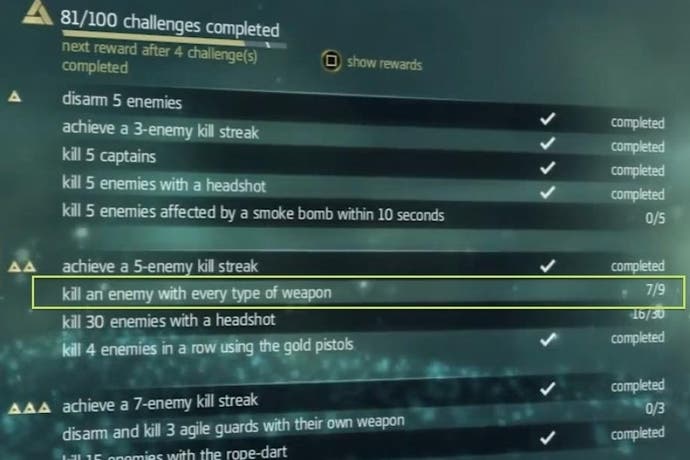

Collectibles are the greatest sinner. About half of most contemporary games seem to involve stuffing multifarious bits of crap into a magic backpack, like an episode of Hoarders starring Mary Poppins. Charitably, you could say this is an easy way of getting players to explore the world, rather than just mainlining the story. Uncharitably, it's a way of stuffing a thin plot with 20 hours of pointless busywork.

Either way, offering all these gewgaws, gimcracks, and whatchamacallits has consequences. Done poorly it doesn't so much hamper player immersion as take it down to the woodshed and beat it with a length of (collectible) hose. It constantly sets up ridiculous scenarios in which your goals as a player are completely at odds with those of your in-game character - you're desperately sneaking out of an enemy base before they hang you at dawn, but oh look there's a pot plant over behind that guard that will bring you up to 80/530 magic petunias.

Is it worth getting so het up about? Probably not, but it exemplifies a general trend that has come to dominate modern game design (at least of the big budget variety). It sees immersion - the ability of the player to lose themselves in the world you've created - coming a distant second to more mundane considerations like accessibility, convenience, and sheer volume of content.

It's where our old friend ludonarrative dissonance comes in. As ugly and well-worn as the term may be, it represents an idea that caught on because it's a simple, obvious way in which the fragile spell of immersion can be broken by prioritising traditional gameplay 'fun' (which usually involves a certain amount of homicidal activity). There's still so often a friction between play and story - most famously in Uncharted, and addressed gently by The Last of Us - that violates the most basic requirement of an involving narrative.

Failure to consider the player's immersion in the experience also affects every other decision in the game, right down to things like how the music cues work. One of the many pleasures of Far Cry 3 was creeping through the jungle lining up mercenary headshots (alright, picking flowers), knowing that, at any moment, a tiger could jump on my head. But this only really worked if I disabled all the music in the game. Otherwise an entire string section would leap to Defcon 1 every time anything more aggressive than a butterfly so much as farted in my direction.

You can see how such decisions are made. Immersion is a woolly concept, difficult to pin down and measure. Excitement, accessibility, hours of play - these are all more solid. Easier to count, or test with a focus group. It would take a firm authorial hand to make sure all the little decisions are pulling in the same direction, from the music to the save system to the collectibles. This is incredibly difficult for most big games, where the guy implementing the music cues and the girl in charge of the combat encounters might not even be in the same country, let alone the same room.

Nevertheless, this is what games need. Without it you end up with situations like the one Ellie picked up on in her Tomb Raider review. "As Lara emerges into the light, the sun breaks through the clouds, the orchestra swells and the island is revealed in all its rugged beauty," she wrote. "It is a wonderful moment of relief and revelation, spoiled by an onscreen message shouting 'ART GALLERY UNLOCKED MAIN MENU/EXTRAS'."

Such a small thing - an alert for an unlock. Compared to the loss of the moment, the gain from showing the player this message is tiny. But this decision was made because no one was looking at the message and the scene together and thinking about how the one affected the other. It's not like it can't be done. There's Dark Souls, of course - there's always Dark Souls - a game from a team of more than 200 people, which nevertheless feels as cohesive as if it were designed by a lone bedroom coder.

It's not asking for the impossible, and we certainly shouldn't forget about accessibility, convenience, or fun. These are all good things - they matter! - but they are not the only things that matter. They should be properly weighed and balanced against the cost they exact from more subtle pleasures. The choice is simple enough: games can make you either a participant in a living world, or merely a cleaner, mindlessly hoovering up all the content before drably moving on.
