Inside PlayStation 4 Pro: How Sony made the first 4K games console

"Power in and of itself is not a goal. The question is, what that power makes possible."

Six weeks on from the unveiling of Sony's latest console and I'm in a conference room in Sony's new San Mateo HQ, revisiting the bulk of the PlayStation 4 Pro titles unveiled so far, accompanied by system architect Mark Cerny. It's a chance to confirm that the new hardware is indeed delivering the high quality 4K gaming experience I witnessed last month, but more to the point, this is where we find out how Sony has managed to pull this off - how it has deployed a relatively slight 4.2 teraflops of GPU power in such a way that makes PS4 Pro a viable console for an ultra HD display.

"When we design hardware, we start with the goals we want to achieve," says Cerny. "Power in and of itself is not a goal. The question is, what that power makes possible."

What becomes clear is that Sony itself - perhaps unlike its rival - does not believe that the concept of the console hardware generation is over. Cerny has a number of criteria he believes amount to a reset in gaming power: primarily, a new CPU architecture and vastly increased memory allocation. And of course, a massive revision in GPU power - Cerny refers to a 434-page, eight-hour PowerPoint presentation he gave to developers about the PS4 graphics core. It was a new paradigm for game makers.

By all of these criteria, PS4 Pro is not a new generation of hardware and there are no 400 page briefings. Sony's new console is an extension to the existing model - the means by which games for the existing PS4 can be optimised to look great on the new range of 4K display hardware, while at the same time offering an enhanced experience for owners of existing HDTVs. It's the same generation - and that's most telling in the actual effort developers will need to put into developing Pro software.

"As a mid-generation release, we knew that whatever we did needed to require minimal effort from developers," Cerny explains. "We showed Days Gone running on PS4 Pro at the New York event. That work was small enough that a single programmer could do it. In general our target was to keep the work needed for PS4 Pro support to a fraction of a per cent of the overall effort needed to create a game, and I believe we have achieved that target."

| | Base PS4 | PS4 Pro | Boost |
|---|---|---|---|
| CPU | Eight Jaguar cores clocked at 1.6GHz | Eight Jaguar cores clocked at 2.1GHz | 1.3x |
| GPU | 18 Radeon GCN compute units at 800MHz | 36 improved GCN compute units at 911MHz | 2.3x FLOPs |
| Memory | 8GB GDDR5 at 176GB/s | 8GB GDDR5 at 218GB/s | 24% more bandwidth, 512MB more useable memory |

The end result is a smart design that may seem somewhat conservative bearing in mind the stark reality that a 4K display is effectively running 4x the pixels of a standard HDTV. But in meeting that challenge, PS4 Pro has to run the existing 700 plus range of PS4 titles flawlessly - a key requirement of the base hardware that had a profound influence on the design of the new console.

"First, we doubled the GPU size by essentially placing it next to a mirrored version of itself, sort of like the wings of a butterfly. That gives us an extremely clean way to support the existing 700 titles," Cerny explains, detailing how the Pro switches into its 'base' compatibility mode. "We just turn off half the GPU and run it at something quite close to the original GPU."

In Pro mode, the full GPU is active and running at 911MHz - a 14 per cent bump in frequency, turning a 2x boost in GPU power into a roughly 2.28x increase. However, the CPU doesn't receive the same increase in raw capabilities - and Sony believes that interoperability with the existing PS4 is the primary reason for sticking with the same, relatively modest Jaguar CPU clusters.
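As a rough sanity check on those GPU figures, here is a minimal Python sketch of the peak-throughput arithmetic. It assumes the usual GCN layout of 64 lanes per compute unit, each retiring one fused multiply-add (two FLOPs) per clock; that layout is general AMD background rather than something stated in this interview, and `peak_tflops` is just an illustrative helper name.

```python
# Peak FP32 throughput for a GCN-style GPU.
# Assumptions (not from the interview): 64 lanes per compute unit,
# one fused multiply-add (2 FLOPs) per lane per clock.
LANES_PER_CU = 64
FLOPS_PER_LANE_PER_CLOCK = 2


def peak_tflops(compute_units, clock_mhz):
    """Theoretical peak single-precision TFLOPs."""
    flops = compute_units * LANES_PER_CU * FLOPS_PER_LANE_PER_CLOCK * clock_mhz * 1e6
    return flops / 1e12


base = peak_tflops(18, 800)  # base PS4: 18 CUs at 800MHz
pro = peak_tflops(36, 911)   # PS4 Pro in Pro mode: 36 CUs at 911MHz

print(f"base PS4 : {base:.3f} TFLOPs")
print(f"PS4 Pro  : {pro:.3f} TFLOPs")
print(f"clock    : {911 / 800:.4f}x")
print(f"overall  : {pro / base:.4f}x")
```

Under those assumptions the Pro lands just under 4.2 teraflops, and the overall uplift works out at about 2.28x, which rounds to the 2.3x shown in the spec table.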

"For variable frame-rate games, we were looking to boost the frame-rate. But we also wanted interoperability. We want the 700 existing titles to work flawlessly," Mark Cerny explains. "That meant staying with eight Jaguar cores for the CPU and pushing the frequency as high as it would go on the new process technology, which turned out to be 2.1GHz. It's about 30 per cent higher than the 1.6GHz in the existing model."

But surely x86 is a great leveller? Surely upgrading the CPU shouldn't make a difference - after all, it doesn't on PC. It simply makes things better, right? Sony doesn't agree in terms of a fixed platform console.

"Moving to a different CPU - even if it's possible to avoid impact to console cost and form factor - runs the very high risk of many existing titles not working properly," Cerny explains. "The origin of these problems is that code running on the new CPU runs code at very different timing from the old one, and that can expose bugs in the game that were never encountered before."

But what about deploying the additional Pro GPU power in base PS4 mode, similar to the Xbox One S? Or even just retaining the 111MHz GPU frequency boost? For Sony, it's all about playing it safe, to ensure that the existing 700 titles just work.

"I've done a number of experiments looking for issues when frequencies vary and... well... [laughs] I think first and foremost, we need everything to work flawlessly. We don't want people to be conscious of any issues that may arise when they move from the standard model to the PS4 Pro."

But as close as the core architecture is - necessarily, it seems - to the base PS4, the Pro does indeed feature a host of enhancements. Of course, more memory is required.

"We felt games needed a little more memory - about 10 per cent more - so we added a gigabyte of slow, conventional DRAM to the console," Cerny reveals, confirming that it's DDR3 in nature. "On a standard model, if you're switching between an application, such as Netflix, and a game, Netflix is still in system memory even when you're playing the game. We use that architecture because it allows for a very quick swap between applications. Nothing needs to be loaded, it's already in memory."

Some might say it's an extravagant use of the PS4's fast GDDR5 memory, so the extra DDR3 memory in the Pro is used to store non-critical apps, opening up more RAM for game developers.

"On PS4 Pro, we do things differently, when you stop using Netflix, we move it to the slow, conventional gigabyte of DRAM. Using that strategy frees up almost one gigabyte of the eight gigabytes of GDDR5. We use 512MB of that freed up space for games, which is to say that games can use 5.5GB instead of the five and we use most of the rest to make the PS4 Pro interface - meaning what you see when you hit the PS button - at 4K rather than the 1080p it is today."

Game developers can use the memory as they see fit, but in most cases, it will be handling the 4K render targets and buffers required, based on the exact same base assets as seen on the PS4 version - so it's unlikely that the 4K texture packs we've seen for the likes of Rise of the Tomb Raider, Far Cry Primal and many others will fit into the available space. Cerny says that these assets can cost "millions of dollars" and don't fit into the core PS4 Pro ethos - cheap, easy 4K support for developers.

To accommodate ultra HD rendering, there are obviously boosts to the GPU too, and they hail from AMD's architectures of the present and indeed the future, just as Cerny said at the PlayStation Meeting last month.

"Polaris is a very energy efficient GPU architecture that lets us boost the GPU power pretty dramatically, while keeping the console form-factor roughly the same. DCC - which is short for delta colour compression - is a roadmap feature that's been improved for Polaris. It's making its PlayStation debut in PS4 Pro," Mark Cerny shares, confirming in the process that this feature was not implemented in the standard PS4 model.

"DCC allows for inflight compression of the data heading towards framebuffers and render targets which results in a reduction in the bandwidth used to access them. Since our GPU power has increased more than our bandwidth, this has the potential to be extremely helpful."

Other elements of Polaris also feature in the PlayStation 4 Pro, though their deployment may have a slightly different feature set than the discrete GPU equivalent.

"The primitive discard accelerator improves the efficiency with which triangles which are too small to affect the rendering are removed from the pipeline. It's easier to turn on and the speed increases can be quite noticeable, particularly if MSAA is being used," Cerny explains.

"Finally, there's better support of variables such as half-floats. To date, with the AMD architectures, a half-float would take the same internal space as a full 32-bit float. There hasn't been much advantage to using them. With Polaris though, it's possible to place two half-floats side by side in a register, which means if you're willing to mark which variables in a shader program are fine with 16-bits of storage, you can use twice as many. Annotate your shader program, say which variables are 16-bit, then you'll use fewer vector registers."

The enhancements in PS4 Pro are also geared to extracting more utilisation from the base AMD compute units.

"Multiple wavefronts running on a CU are a great thing because as one wavefront is going out to load texture or other memory, the other wavefronts can happily do computation. It means your utilisation of vector ALU goes up," Cerny shares.

"Anything you can do to put more wavefronts on a CU is good, to get more running on a CU. There are a limited number of vector registers so if you use fewer vector registers, you can have more wavefronts and then your performance increases, so that's what native 16-bit support targets. It allows more wavefronts to run at the same time."

We also gained some insight into how AMD's semi-custom hardware design relationships actually work. Up until now, the perception has been that console APUs are actually an assemblage of off-the-shelf AMD parts with limited modification. After all, PS4's GPU looks a lot like the Pitcairn design that debuted in the Radeon HD 7850 and 7870. The Xbox One equivalent bears more than a passing relationship to the Bonaire processor that debuted in the Radeon HD 7790.

"You may later on see something that looks very much like a console GPU as a discrete GPU, but that's then being very familiar with the design and taking inspiration from the console GPU. So the similarity, if you see one, is actually the reverse of what you're thinking," Cerny explains, saying that console designs are 'battle-tested' and thus easier to deploy as discrete GPU products.

"A few AMD roadmap features are appearing for the first time in PS4 Pro," Cerny continues, giving a broad overview of how the semi-custom relationship functions.

"How it works is that we sit down with AMD, who are terribly collaborative. It's a real pleasure to work with them. So basically, we go ahead and say how many CUs we want to have and we look at the roadmap features and we look at area and we make some decisions and we even - in this case - have the opportunity, from time to time, to have a feature in our chip before it's in a discrete GPU. We have two of these this time, which is very nice."

And that work feeds back into the Radeon discrete products too, useful in maintaining consistency between PC and console games development. Asynchronous compute, for example, has had a huge benefit for AMD on PC DX12 applications, specifically because of the additional hardware scheduling pipelines championed by Mark Cerny in the PS4 design.

"We can have custom features and they can eventually end up on the [AMD] roadmap," Cerny says proudly. "So the ACEs... I was very passionate about asynchronous compute, so we did a lot of work there for the original PlayStation 4 and that ended up getting incorporated into subsequent AMD GPUs, which is nice because the PC development community gets very familiar with those techniques. It can help us when the parts of GPUs that we are passionate about are used in the PC space."

In actual fact, two new AMD roadmap features debut in the Pro, ahead of their release in upcoming Radeon PC products - presumably the Vega GPUs due either late this year or early next year.

"One of the features appearing for the first time is the handling of 16-bit variables - it's possible to perform two 16-bit operations at a time instead of one 32-bit operation," he says, confirming what we learned during our visit to VooFoo Studios to check out Mantis Burn Racing. "In other words, at full floats, we have 4.2 teraflops. With half-floats, it's now double that, which is to say, 8.4 teraflops in 16-bit computation. This has the potential to radically increase performance."

A work distributor is also added to the GPU design, designed to improve efficiency through more intelligent distribution of work.

"Once a GPU gets to a certain size, it's important for the GPU to have a centralised brain that intelligently distributes and load-balances the geometry rendered. So it's something that's very focused on, say, geometry shading and tessellation, though there is some basic vertex work as well that it will distribute," Mark Cerny shares, before explaining how it improves on AMD's existing architecture.

"The work distributor in PS4 Pro is very advanced. Not only does it have the fairly dramatic tessellation improvements from Polaris, it also has some post-Polaris functionality that accelerates rendering in scenes with many small objects... So the improvement is that a single patch is intelligently distributed between a number of compute units, and that's trickier than it sounds because the process of sub-dividing and rendering a patch is quite complex."

Beyond that, we're moving into the juicy stuff - the custom hardware that Sony has introduced, elements of the 'secret sauce' that allow the Pro graphics core to punch so far above its weight. In creating 4K framebuffers, a lot of the technological underpinnings are actually based on advanced anti-aliasing work with the creation of new buffers that can be exploited in a number of ways.

Right now, post-process anti-aliasing techniques like FXAA or SMAA have their limits. Edge detection accuracy varies dramatically. Searches based on high contrast differentials, depth or normal maps - or a combination - all have limitations. Sony has fashioned its own, highly innovative solution.

"We'd really like to know where the object and triangle boundaries are when performing spatial anti-aliasing, but contrast, Z [depth] and normal are all imperfect solutions," Cerny says. "We'd also like to track the information from frame to frame because we're performing temporal anti-aliasing. It would be great to know the relationship between the previous frame and the current frame better. Our solution to this long-standing problem in computer graphics is the ID buffer. It's like a super-stencil. It's a separate buffer written by custom hardware that contains the object ID."

It's all hardware based, written at the same time as the Z buffer, with no pixel shader invocation required and it operates at the same resolution as the Z buffer. For the first time, objects and their coordinates in world-space can be tracked, even individual triangles can be identified. Modern GPUs don't have this access to the triangle count without a huge impact on performance.

"As a result of the ID buffer, you can now know where the edges of objects and triangles are and track them from frame to frame, because you can use the same ID from frame to frame," Cerny explains. "So it's a new tool to the developer toolbox that's pretty transformative in terms of the techniques it enables. And I'm going to explain two different techniques that use the buffer - one simpler that's geometry rendering and one more complex, the checkerboard."

Geometry rendering is a simpler form of ultra HD rendering that allows developers to create a 'pseudo-4K' image, in very basic terms. 1080p render targets are generated with depth values equivalent to a full 4K buffer, plus each pixel also has full ID buffer data - the end result is that via a post-process, a 1080p setup of these 'exotic' pixels can be extrapolated into a 4K image with support for alpha elements such as foliage and storm fences (albeit at an extra cost). On pixel-counting, it would resolve as a native 4K image, with 'missing' data extrapolated out using colour propagation from data taken from the ID buffer. However, there is a profound limitation.

"The pixel shader invocations don't change so texture resolution doesn't change," Cerny explains, pointing to blurry, 1080p-grade texture detail on some Infamous First Light images. "And specular effects don't change. But it does improve image quality pretty dramatically."

The cost is very low and our take on this is that it could help super-sampling scenarios at 1080p, but Mark Cerny points out another potential application. Titles rendering at 900p on base PS4 hardware could get a bump to 1080p using some of the extra power, then geometry rendering could boost them to 4K. From the InFamous demo I saw, there is a big boost to clarity but it's not akin to the complete, native 4K package. Checkerboarding is another matter.

Checkerboarding up to full 4K is more demanding and requires half the basic resolution - a 1920x2160 buffer - but with access to the triangle and object data in the ID buffer, beautiful things can happen as technique upon technique layers over the base checkerboard output.

"First, we can do the same ID-based colour propagation that we did for geometry rendering, so we can get some excellent spatial anti-aliasing before we even get into temporal, even without paying attention to the previous frame, we can create images of a higher quality than if our 4m colour samples were arranged in a rectangular grid... In other words, image quality is immediately better than 1530p," Cerny explains earnestly.

"Second, we can use the colours and the IDs from the previous frame, which is to say that we can do some pretty darn good temporal anti-aliasing. Clearly if the camera isn't moving we can insert the previous frame's colours and essentially get perfect 4K imagery. But even if the camera is moving or parts of the scene are moving, we can use the IDs - both object ID and triangle ID to hunt for an appropriate part of the previous frame and use that. So the IDs give us some certainty about how to use the previous frame. "

So how do geometry rendering and checkerboarding compare in terms of their plus and minus points?

"[With] checkerboard rendering, the first two pluses are the same: crisp edges, detailed foliage, storm fences, but also, increased detail in textures, increased detail in specular effects," Mark Cerny explains. "But we're doubling pixel shader workload, there are other overheads as well and it may not be possible to from 1080p native all the way up to 2160p checkerboard."

What's clear is that geometry rendering is cheaper and a single developer can get a solution up and running within a few days. Checkerboarding is more intense in many respects and requires more work - a few weeks. And there are knock-on effects. Temporal anti-aliasing needs tuning, something I saw on the Horizon Zero Dawn demo at the PlayStation Meeting last month.

"The point though is that these are techniques that can be implemented for a fraction of a per cent for the overall budget for the title," Cerny concludes - and with sample code supplied to developers, there should be relatively few issues in all future titles supporting PS4 Pro. So how are the New York titles I saw last month shaping up? What are the techniques deployed there?

Uncharted 4 is undergoing retooling ("they're taking another look at rendering strategies," says Cerny) but of the 13 games revealed, nine used checkerboarding. Days Gone, Call of Duty Infinite Warfare, Rise of the Tomb Raider and Horizon Zero Dawn all render up to 2160p with checkerboarding, super-sampling down to 1080p on full HD displays, while the Lara Croft title has multiple modes with explicit 1080p support. Mark Cerny is keen to point out that developers are free to use the checkerboarding tech as they see fit, so we will see many different variations and interpretations.

"Watch Dogs 2, Killing Floor 2, InFamous and Mass Effect Andromeda all use 1800p checkerboarding," Cerny tells me, and there's potentially some pretty awesome news for 1080p screen owners too. "Super-sampling again is very popular for HDTV support. Mass Effect Andromeda has two very different strategies. They have checkerboard for 4K and they have a separate mode for high quality graphics at 1080p."

From 1800p though, developers can use a software scale with 2160p HUD and menu elements, or stick to 1800p and allow the hardware to scale up to 4K. Deus Ex Mankind Divided is a curious title. It too uses checkerboard rendering but it also adopts a dynamic framebuffer. Resolution varies between 1800p and 2160p based on scene complexity. There's only one screenshot available so far and we count that at 3360x1890.
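With this many resolutions flying around, it helps to compare them by pixel count. The sketch below assumes "1800p" means a 16:9 frame of 3200x1800, which is the conventional reading but isn't spelled out here; the helper names are illustrative. It also works out the 16:9 resolution that would hold the same number of samples as a 1920x2160 checkerboard buffer, which is where Cerny's 1530p comparison comes from.

```python
import math

UHD = (3840, 2160)


def share_of_uhd(width, height):
    """Fraction of a native 4K frame's pixels that a resolution covers."""
    return (width * height) / (UHD[0] * UHD[1])


def equivalent_16x9_height(samples):
    """Height of a 16:9 frame containing the given number of samples."""
    return math.sqrt(samples * 9 / 16)


modes = {
    "1080p": (1920, 1080),
    "1800p (assumed 3200x1800)": (3200, 1800),
    "Deus Ex screenshot": (3360, 1890),
    "2160p": UHD,
}
for name, (w, h) in modes.items():
    print(f"{name:28s} {w * h:>9,d} pixels  {share_of_uhd(w, h):6.1%} of 4K")

checkerboard_samples = 1920 * 2160
print(f"2160p checkerboard shades {checkerboard_samples:,d} samples per frame,")
print(f"about the same as a {equivalent_16x9_height(checkerboard_samples):.0f}p 16:9 image")
```

On that basis the Deus Ex screenshot sits at a little over three quarters of a native 4K frame, while 1800p is just under 70 per cent; checkerboarded modes shade half of whichever figure applies.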

"So nine of the 13 titles are checkerboard. Of the four that are not, Shadow of Mordor uses native rendering at dynamic resolution. The resolution can vary broadly but generally it's 80 to 90 per cent of 4K," Cerny continues. "The patch to Paragon has a mode for HDTVs with 1080p native rendering and many enhancements to visuals including motion blur, procedural ground cover, special effects like these god rays... on a 4K TV you simply see an upscaled version of the 1080p image."

However, upscaled 1080p won't be too common, with Sony affirming that supporting higher resolution ultra HD screens is strongly encouraged.

"Going forward we are highly encouraging direct support for 4K TVs and HDTVs, though we leave the specifics of how they do that up to the development community," Cerny continues. "They know best, but we really do want to see a higher resolution mode for 4K TVs and then some technique for HDTVs. It can be just scaling down from a higher resolution."

Already we are seeing developers adopt their own take on 4K presentations. Both the upcoming Spider-Man and For Honor use four million jittered samples to produce what the developers believe to be a superior technique compared to checkerboarding, with a similar computational cost. But the unique ID buffer Sony provides could still prove instrumental to the success of these techniques.

"The ID buffer will integrate with any of these techniques, even native rendering," Cerny continues. "You'd use the ID buffer then to get temporal and spatial anti-aliasing. It layers with everything."

Demos followed and they were just as good as they were at the PlayStation Meeting. In fact they were better as we got to see much more - like a comparison of native 4K, geometry rendering and checkerboard 4K in InFamous First Light. I also saw Days Gone side-by-side at ultra HD native vs checkerboard, with an artificially low frame-rate limit in place to make the comparison fairer. The comparison really is remarkable - I stood one foot away from a 65-inch Sony ZD9 display and the quality still held up. Checkerboard is a touch softer, but I'd be willing to bet that most wouldn't be able to tell. The bottom line is that the increase in quality over 1080p is vast.

But there was good news for owners of standard HDTVs too. Moire patterns and temporal shimmering disappear in Shadow of Mordor, giving a much cleaner presentation. Rise of the Tomb Raider's unimpressive, pixel-crawling AA solution gives way to a pristine, solid look. PS4 Pro works best with a 4K display, but there are genuine wins here for those with HDTVs and with Paragon, Tomb Raider and Mass Effect Andromeda, it's clear that key developers want to appeal to those perhaps not ready to upgrade to a 4K screen.

But perhaps the biggest takeaway I had from the meeting with Mark Cerny was the insight into how Sony views the console generations. PS4 Pro and Project Scorpio have been seen as the beginning of the end for generational jumps to a new, more capable wave of hardware, in favour of intermediate upgrades. What's clear is that Sony isn't buying into this. Cerny cites incompatibility problems, even moving between x86 CPU and AMD GPU architectures. I came away with the impression that PS5 will be a clean break, an actual generational leap as we know it. I do not feel the same about Project Scorpio, where all the indications are that Microsoft is attempting to build its own Steam-like library around the Xbox brand, with games moving with you from one console to the next - and eventually, maybe even to the PC.

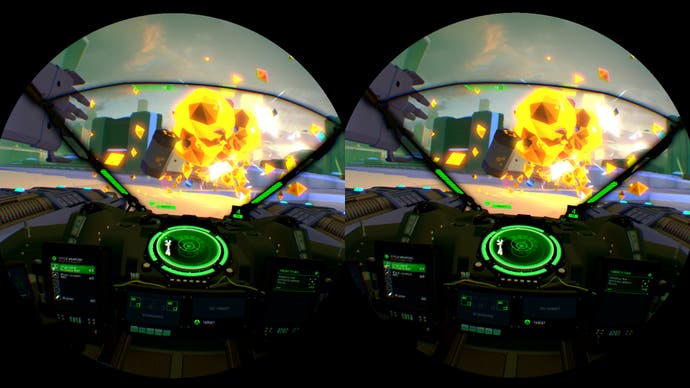

But in the here and now, my meeting with Mark Cerny served two purposes: I saw more of the PlayStation Meeting demos, and more of the 1080p enhancements we can expect. On both counts, I'm still impressed by the results of this £350/$399 box. Not every title will be absolutely pristine, but we're seeing good results already and it's great to see developers investigating their own techniques for achieving presentable 4K - I'm really looking forward to seeing how Spider-Man stacks up in particular. And there will be boosts for VR games too, with hardware multi-res support that should improve performance on second-gen PSVR titles.

We'll have more on that - plus other custom hardware features - soon, but in the meantime, the wait continues until we get hands-on with retail hardware and a big bunch of games. It's going to be fun.

Digital Foundry met with Mark Cerny at the PlayStation Campus in San Mateo. Sony paid for travel and accommodation.