We built a PC with PlayStation Neo's GPU tech

Can it deliver a generational leap over PS4?

We're months away from the release of PlayStation Neo and as things stand, with a marketing black-out from Sony, it's still unclear what this faster, more powerful console is actually for. All we have to go on are leaked developer guidelines, which demand feature parity with the existing PlayStation 4. Beyond that, Sony's recommendations to developers are surprisingly open-ended. All Neo games must render at 1080p or higher at the same performance level - or better. Otherwise, developers get to choose what they do with a mooted 2.3x boost to GPU power.

So we were wondering, just how much of a generational leap does Neo actually represent? Sony's docs focus heavily on supporting 4K displays, but to what extent is that actually possible with the GPU horsepower on tap? We wanted to get an idea of what the new graphics core is actually capable of, so we built our own 'Neo' and put it to the test.

It's not as crazy as it sounds. The leaked graphics specifications for PlayStation Neo are a match for the latest AMD graphics core, codenamed Polaris, released to the public recently in desktop GPU form as the Radeon RX 480. We're looking at 36 compute units based on 'improved' AMD Graphics Core Next (GCN) architecture - just like the RX 480. The difference comes in terms of clock-speed. The RX 480 runs at a maximum 1266MHz while Neo's GPU runs at 911MHz - a necessity for a small, closed box system.

And curiously that clock-speed is baked into RX 480's power management set-up, rounded down to 910MHz. It's 'power state two' - the second of seven power states that can be easily configured by PC users meaning that, yes, we can run RX 480 at the same clocks as the Neo GPU core. Confronted with identical gaming workloads, we can now scale up resolution to see just how far the Neo GPU can go before frame-rates become unplayable. Can we get a playable 4K experience?

On top of that, can we get some idea of the scalability between PS4 and Neo? While there's not a direct equivalent to PlayStation 4's GPU in the Radeon line-up, the Pitcairn GPU found in the Radeon HD 7850, R7 265 and R7 370 is close enough. PS4 has two extra compute units, but they run at 800MHz. We have a Sapphire R7 265 in hand, running at 925MHz - down-clock that to 900MHz and we have a lock with PS4's 1.84 teraflops of compute power. We paired our console surrogate GPUs with a meaty high-end Core i7 6700K PC in order to put GPU performance front and centre, and tried to equalise GPU memory bandwidth as closely as we could. Neo seems to use 6.6Gbps modules, while the slowest our RX 480 would run at was 7.0Gbps with a 4GB vBIOS in place. Our recent RX 480 4GB vs 8GB Face-Off covers the impact of memory bandwidth differentials, and a gap this small shouldn't affect our results too much.

In our Face-Offs, we like to get as close a lock as we can between PC quality presets and their console equivalents in order to find the quality sweet spots chosen by the developers. Initially using Star Wars Battlefront, The Witcher 3 and Street Fighter 5 as comparison points with as close to locked settings as we could muster, we were happy with the performance of our 'target PS4' system. The Witcher 3 sustains 1080p30, Battlefront hits 900p60, SF5 runs at 1080p60 with just a hint of slowdown on the replays - just like PS4. We have a ballpark match, and we would expect to see similar on our 'Neo' set-up.

Now, before we go on, we should stress that this obviously isn't a Neo benchmark. We need a Neo for that. The idea of this testing is to compare the same gaming workloads run across Radeon hardware that closely resembles the parts Sony has chosen for its latest consoles. And from there, we can ascertain several things - though scalability across resolutions is our primary concern. Console developers can address GPU hardware more directly of course, but even if we factor out raw compute, there will be constraints in the form of memory bandwidth and crucially, pixel fill-rate. In attempting to simulate a substantial resolution boost for PS4 games, we get some idea of the challenges developers are currently facing.

| | PlayStation 4 | Radeon R7 265 | PS Neo | Radeon RX 480 |
|---|---|---|---|---|
| GPU | 18 Radeon GCN compute units at 800MHz | 16 Radeon GCN compute units at 925MHz | 36 GCN compute units at 911MHz | 36 GCN compute units at max 1266MHz |
| Memory Bandwidth | 8GB GDDR5 at 176GB/s | 2GB GDDR5 at 178GB/s | 8GB GDDR5 at 218GB/s | 4GB GDDR5 at 224GB/s |
| Compute Power As Tested | 1.84TF | Reduce clock to 900MHz for 1.84TF | 4.2TF | Reduce clock to 911MHz for 4.2TF |
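For anyone wanting to check the compute figures in the table, here is a minimal sketch of the arithmetic. It assumes the standard GCN layout of 64 shader lanes per compute unit, each retiring one fused multiply-add (two floating point operations) per clock. That layout is general GCN knowledge rather than something stated in the leaked documents, and the function and variable names are our own.

```python
# Peak FP32 throughput for a GCN GPU.
# Assumption (not from the leaked docs): 64 lanes per compute unit,
# each lane doing one fused multiply-add (2 FLOPs) per clock.
LANES_PER_CU = 64
FLOPS_PER_LANE_PER_CLOCK = 2


def teraflops(compute_units: int, clock_mhz: float) -> float:
    """Return theoretical peak compute in teraflops."""
    flops = compute_units * LANES_PER_CU * FLOPS_PER_LANE_PER_CLOCK * clock_mhz * 1e6
    return flops / 1e12


# (compute units, clock in MHz) taken from the spec table
configs = {
    "PlayStation 4": (18, 800),
    "R7 265 at 900MHz": (16, 900),
    "PS Neo": (36, 911),
    "RX 480 at 1266MHz": (36, 1266),
}

results = {name: teraflops(cu, mhz) for name, (cu, mhz) in configs.items()}

for name, tf in results.items():
    print(f"{name}: {tf:.2f}TF")

neo_vs_ps4 = results["PS Neo"] / results["PlayStation 4"]
print(f"Neo vs PS4: {neo_vs_ps4:.2f}x")
```

The down-clocked R7 265 lands on the same figure as PS4 because 16 units at 900MHz and 18 units at 800MHz multiply out identically, and the Neo-to-PS4 ratio rounds to the mooted 2.3x.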

Star Wars Battlefront's Endor stage is a testing work-out for the original PS4, operating at 900p with a mixed bag of quality presets - and the 60-70fps we get running the game unlocked on our PC surrogate is broadly similar to what we would expect the console title to hand in were the v-sync lock disabled. Initial results from our self-built 'Neo' are both disappointing and encouraging at the same time. 4K is a write-off - our base PS4 substitute at 900p runs 2.2x faster than 'Neo' at 4K, but at the same time, the fact is that we are powering 5.8x the number of pixels.

Sony is advocating a number of conventional upscaling resolution targets and 3200x1800 is the lowest it recommends. This sees frame-rates rise to a 39.1fps average, but our base PS4 comparison point is still 65 per cent faster. However, 1440p gets closer. This is a 2.6x boost to resolution and the average comes in at 57.7fps - around 12 per cent off the pace set by the 900p 'original'.
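The pixel-count multipliers quoted for Battlefront can be reproduced with a few lines of arithmetic. This is only a sketch: it assumes 900p means a 1600x900 framebuffer, and it back-calculates the PS4 surrogate's average from the two percentages quoted above rather than from a measured figure.

```python
# Framebuffer sizes; 1600x900 for "900p" is our assumption.
RESOLUTIONS = {
    "900p": (1600, 900),
    "1440p": (2560, 1440),
    "1800p": (3200, 1800),
    "4K": (3840, 2160),
}


def pixels(name: str) -> int:
    width, height = RESOLUTIONS[name]
    return width * height


base = pixels("900p")
ratios = {name: pixels(name) / base for name in RESOLUTIONS}

for name, ratio in ratios.items():
    print(f"{name}: {pixels(name):,} pixels, {ratio:.2f}x the 900p pixel count")

# Back-calculate the PS4 surrogate average implied by the quoted percentages.
implied_from_1800p = 39.1 * 1.65         # base is "65 per cent faster" than 39.1fps
implied_from_1440p = 57.7 / (1 - 0.12)   # 57.7fps is "12 per cent off the pace"
print(f"Implied 900p average: {implied_from_1800p:.1f}fps / {implied_from_1440p:.1f}fps")
```

Both implied averages sit inside the 60-70fps window reported for the PS4 surrogate, so the percentages hang together.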

The sense that 1440p may be the optimal sweet spot for the Polaris 10 GPU is strengthened by our Street Fighter 5 testing, where we play back the same replay across multiple resolutions on our Polaris 10 set-up and at straight 1080p on the R7 265-powered PS4 surrogate. Medium settings is a direct match for the PS4 version here and not surprisingly, our base-level PS4 hardware runs it very closely to the console we're seeking to mimic. What's interesting here is that SF5 doesn't drop frames, it slows down instead. Polaris 10 at 1440p keeps pace, but 1800p sees a 12 second lag on replay completion rising to 54 seconds at full 4K.

All the evidence collated thus far does indeed suggest that the Polaris 10 processor, pared back to Neo clocks, has 1440p as the rendering sweet-spot. And that's great, but not exactly revelatory. Sony's Neo documentation isn't keen on 1440p as a framebuffer target either, as it's not a great fit for a 4K display - it's the equivalent of running 720p on a full HD screen, which doesn't make for an impressive presentation. So do we have a problem here - a fundamental mismatch between Neo's potential capabilities and 4K display tech? Perhaps, but perhaps not.

We benchmarked seven PC games on console-equivalent settings or as close as we could get, with the R7 265 running at 1080p, reducing texture quality where required to ensure that its 2GB framebuffer wasn't a limiting factor. There are some disappointments but equally, there are some genuinely impressive results. Take The Witcher 3, for example. Our Novigrad City test run hits a 33.3fps average on the R7 265 PS4 target hardware - pretty much in line with console performance. However, the same test run on Polaris 10 at Neo spec sees a huge jump in performance at 1440p, and at 1800p there's just a six per cent drop compared to the R7 265 running at 1080p. 4K remains off the table, but 3200x1800 is essentially the 4K equivalent to a 900p upscale for full HD displays.

Rise of the Tomb Raider also sees a good result. At 1800p, we're about 14 per cent off the pace set by our base-level PS4 equivalent hardware running at 1080p, but crucially, we're still above 30fps. However, there's little evidence that demanding triple A games will deliver a native 4K experience on Neo-level hardware, and some of the 1440p results (representing a 78 per cent pixel count increase over 1080p) are disappointingly low, suggesting that we could do with more memory bandwidth. The rise from PS4's 176GB/s to Neo's 218GB/s clearly isn't scaling in line with the huge boost in GPU compute. But some results are showing genuine potential here, with that 2.3x increase in overall GPU power in theory allowing for something in the region of a 2x boost to base resolution.

| Game, Settings (average fps) | PS4 Target 1080p | Neo Target 1080p | Neo Target 1440p | Neo Target 1800p | Neo Target 4K |
|---|---|---|---|---|---|
| The Witcher 3, Console Settings, Post-AA | 33.3 | 64.6 | 44.3 | 31.4 | 23.4 |
| Rainbow Six Siege, Console Settings, MSAA Upscale | 67.1 | 121.9 | 80.8 | 55.5 | 39.7 |
| Far Cry Primal, Very High, SMAA | 46.2 | 64.0 | 42.7 | 29.8 | 22.1 |
| Grand Theft Auto 5, Console Settings, Post-AA | 49.5 | 80.8 | 57.1 | 35.0 | 27.6 |
| Mirror's Edge Catalyst, Medium/High, FXAA High | 70.6 | 98.9 | 57.9 | 43.3 | 31.6 |
| Rise of the Tomb Raider, Console Settings, SMAA | 40.8 | 73.1 | 50.4 | 35.2 | 26.2 |
| Crysis 3, High, SMAA T2X | 50.9 | 89.2 | 55.9 | 36.1 | 26.7 |
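To make the scaling in the table easier to read, the sketch below expresses each 'Neo target' result as a fraction of the PS4 target's 1080p average, then lists which games hold 30fps at each resolution. The figures are copied from the table; the shortened game names, the helper names and the 30fps cut-off are our own choices for illustration.

```python
# Average fps from the benchmark table:
# (PS4 target 1080p, Neo target 1080p, 1440p, 1800p, 4K)
RESULTS = {
    "The Witcher 3": (33.3, 64.6, 44.3, 31.4, 23.4),
    "Rainbow Six Siege": (67.1, 121.9, 80.8, 55.5, 39.7),
    "Far Cry Primal": (46.2, 64.0, 42.7, 29.8, 22.1),
    "Grand Theft Auto 5": (49.5, 80.8, 57.1, 35.0, 27.6),
    "Mirror's Edge Catalyst": (70.6, 98.9, 57.9, 43.3, 31.6),
    "Rise of the Tomb Raider": (40.8, 73.1, 50.4, 35.2, 26.2),
    "Crysis 3": (50.9, 89.2, 55.9, 36.1, 26.7),
}
NEO_RESOLUTIONS = ("1080p", "1440p", "1800p", "4K")


def relative_to_ps4(fps_row):
    """Neo target fps at each resolution as a fraction of PS4 target fps."""
    ps4_fps, *neo_fps = fps_row
    return {res: fps / ps4_fps for res, fps in zip(NEO_RESOLUTIONS, neo_fps)}


def games_at_or_above(resolution, target_fps=30.0):
    """Games whose Neo target average meets target_fps at the given resolution."""
    column = NEO_RESOLUTIONS.index(resolution) + 1  # column 0 is the PS4 target
    return [game for game, row in RESULTS.items() if row[column] >= target_fps]


relative = {game: relative_to_ps4(row) for game, row in RESULTS.items()}

for game, ratios in relative.items():
    summary = ", ".join(f"{res} {ratio:.2f}x" for res, ratio in ratios.items())
    print(f"{game}: {summary}")

for res in NEO_RESOLUTIONS:
    print(f"30fps or better at {res}: {len(games_at_or_above(res))} of {len(RESULTS)}")
```

Averages hide dips, of course, so a game that clears 30fps here would not necessarily hold a locked 30fps in play.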

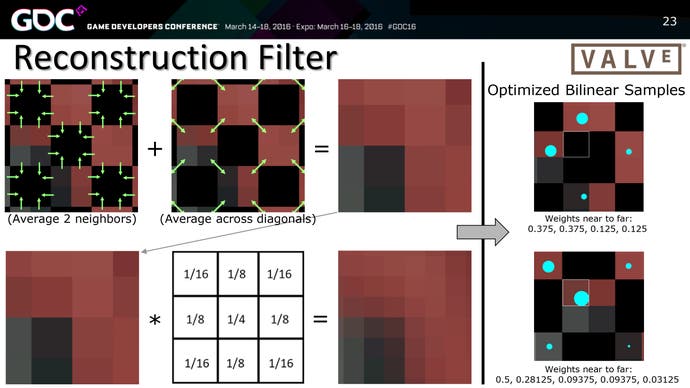

And that 2x increase over standard 1080p could prove crucial. In addition to recommending that developers experiment with standard upscaling, Sony is also talking about cutting-edge forms of pixel reconstruction - in particular what it refers to as the 2x2 checkerboard. It's a new one on us, but eventually we found what looks to be a match in a GDC talk called Advanced VR Rendering Performance, presented by Valve's Alex Vlachos. It's a presentation well worth checking out as it reveals a range of clever optimisations used in getting decent VR performance from low-end GPU hardware, but it also discusses the 2x2 checkerboard technique.

In essence, the GPU uses post-processing techniques to extrapolate a 4x4 pixel block from native 2x2 rendering. In theory, this should produce a decent 4K image while requiring just a 2x 1080p pixel count (around a 2688x1512 native framebuffer, if you like). We've not seen the technique in action before, but Sony mentions it several times in its documentation so we should assume that its R&D masterminds believe it can produce pleasing results on a 4K screen.
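As a rough guide to the pixel budget involved, this sketch works out what a framebuffer with a given multiple of the 1080p pixel count looks like at the same 16:9 shape. It is arithmetic only and says nothing about how Sony's reconstruction actually works; the function name is our own.

```python
import math

FULL_HD = (1920, 1080)
UHD_4K = (3840, 2160)


def scaled_framebuffer(base, pixel_multiplier):
    """Scale both axes equally so the pixel count grows by pixel_multiplier."""
    axis_scale = math.sqrt(pixel_multiplier)
    return round(base[0] * axis_scale), round(base[1] * axis_scale)


def pixel_ratio(resolution, base=FULL_HD):
    return (resolution[0] * resolution[1]) / (base[0] * base[1])


exact_2x = scaled_framebuffer(FULL_HD, 2)
quoted = (2688, 1512)  # the approximate figure given in the text

print(f"Exact 2x 1080p framebuffer: {exact_2x[0]}x{exact_2x[1]}")
print(f"2688x1512 is {pixel_ratio(quoted):.2f}x the 1080p pixel count")
print(f"Native 4K is {pixel_ratio(UHD_4K):.0f}x the 1080p pixel count")
```

2688x1512 is a 1.4x scale on each axis, which is why it comes in slightly under a true doubling, and either way the GPU shades roughly half the pixels of a native 4K frame.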

Upscaling is rarely, if ever, a match for the clarity of a native resolution framebuffer, but quite aside from 900p working out quite nicely this generation, we have seen some evidence of some truly impressive upscaling techniques. For example, did you know that Ubisoft's Rainbow Six Siege on PS4 upscales a 960x540 base image? And the Xbox One version is even lower at 800x450. In motion, it looks great.

Ubisoft is using dual techniques here, utilising 2x MSAA in combination with a temporal anti-aliasing technique to quadruple output resolution. It's an absolutely fascinating upscaling algorithm - and the great news is that we can enable and disable the technique on the PC version and run it at any resolution we want. Benchmarking Polaris 10 at Neo clocks at 3200x1800, we're still some way off the pace compared to the older Radeon running at 1080p (though curiously, mid-way through the bench, you'll note that performance equalises), but when we re-run the sequence without the MSAA upscale in effect, frame-rates nosedive. In this case, the upscale technique is increasing performance by 55 per cent.

But what about the quality? Well, there is a slight softness in motion and hard aliased edges have an interesting dither pattern, reminiscent of a clutch of old PS3 titles that used MSAA upscaling. But the overall quality level holds up very well indeed and it's no surprise to see that Ubisoft opted for this technique as the default across all versions of Rainbow Six Siege. In and of itself, it does not solve the challenge facing Neo developers in producing a good-looking 4K presentation, but it shows fascinating results that could bear fruit further into the future. At the base level, a 1080p framebuffer with 2x MSAA clearly puts far less stress on the GPU than a straight 3840x2160 resolution.

The overall takeaway from our testing is clear, however. While we can expect to see some native 4K titles on PlayStation Neo, the fact is that cutting-edge triple A titles are unlikely to hit the same target. At the base level, a 2.3x improvement in compute can't satisfy a 4x boost to resolution - and that doesn't factor in other limiting factors, such as a relatively small boost to memory bandwidth, and the lack of a 4x increase to pixel fill-rate with the new hardware. It may take some time, but we could get some good results through innovative upscaling.
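The mismatch is easy to put into numbers. The sketch below divides the compute and memory bandwidth uplifts from the spec table by the 4x pixel increase of native 4K over 1080p. Treating resources as scaling linearly with pixel count is a simplification on our part, and fill-rate is left out because the leaked specs we've quoted don't give us a figure to work with.

```python
# Figures from the spec table
PS4_TFLOPS, NEO_TFLOPS = 1.84, 4.2
PS4_BANDWIDTH_GBS, NEO_BANDWIDTH_GBS = 176, 218

# Native 4K has four times the pixels of 1080p
PIXEL_INCREASE_4K = (3840 * 2160) / (1920 * 1080)

compute_uplift = NEO_TFLOPS / PS4_TFLOPS
bandwidth_uplift = NEO_BANDWIDTH_GBS / PS4_BANDWIDTH_GBS

# Resources available per rendered pixel at native 4K, relative to PS4 at 1080p.
# Simplifying assumption: cost scales linearly with pixel count.
compute_per_pixel = compute_uplift / PIXEL_INCREASE_4K
bandwidth_per_pixel = bandwidth_uplift / PIXEL_INCREASE_4K

print(f"Compute uplift: {compute_uplift:.2f}x")
print(f"Bandwidth uplift: {bandwidth_uplift:.2f}x")
print(f"Compute per pixel at 4K: {compute_per_pixel:.0%} of PS4 at 1080p")
print(f"Bandwidth per pixel at 4K: {bandwidth_per_pixel:.0%} of PS4 at 1080p")
```

On this crude measure, a native 4K frame gets a little over half the compute per pixel that PS4 has at 1080p, and less than a third of the bandwidth.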

Alternatively, it may well be the case that developers simply use the opportunity to push Neo in other directions. In the benchmark table above we've included 1080p metrics for Polaris 10 running at Neo clocks. We've not concentrated on the results so much here because scalability varies - generally speaking, if you push frame-rates high enough, you will hit the brick wall that is AMD's DX11 driver overhead (even with an overclocked i7). Neo's challenge here will be different - a straight lack of CPU power by comparison. Some of the scalability results are encouraging though: a 60fps upgrade for capped 30fps titles looks viable in many cases, and the metrics also demonstrate that a Polaris 10-powered Neo will be very useful for VR.

And there's another aspect worthy of consideration: an improved 1080p experience, which is what the lion's share of the core actually want, based on the reaction to our article on whether 4K really is the best use of the next-gen consoles' specs. And so we went back to Star Wars Battlefront, and ran it at a straight 1080p at ultra settings. The result was remarkable: console settings at 900p on the R7 265 still handed in a five per cent performance advantage, testament to just how much of a computational load ultra settings carry these days. Performance could be equalised easily enough, though - pro-tip: you rarely ever need ultra quality shadows.

And the test is rather apt, actually. While Sony is keen on developers getting the most out of 4K displays, the fact is that the minimum required resolution that game makers must support is good old 1080p. There's nothing to stop the likes of DICE simply using the Neo spec to cut out 900p upscaling, then ramping up the quality presets. And for multiplatform developers in particular, this could produce the most crowd-pleasing results - improved visual quality, native resolution full HD support and stabilised performance. Bearing in mind that the tech is already baked into their engines, it may well be the easiest way to leverage the technology in the short term.