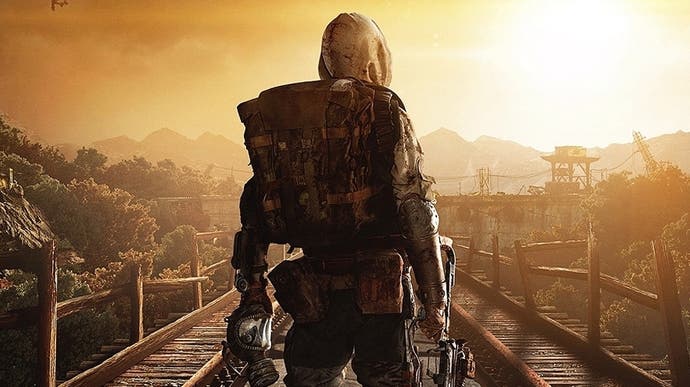

The Making of Metro Exodus Enhanced Edition

A Digital Foundry tech interview.

Metro Exodus Enhanced Edition is a very important game - it's the first triple-A game we know of that's built on technology that demands hardware-accelerated ray tracing. To be clear, the new Metro is not a fully path-traced game built entirely on RT, but rather a hybrid renderer where global illumination, lighting and shadows are handled by ray tracing, while other elements of the game still use traditional rasterisation techniques. The bottom line though is that this is the foundation for developer 4A Games going forward: its games will require a PC with hardware RT graphics capabilities, while their console versions will tap into the same acceleration features found on the ninth generation consoles. And while 4A is the first developer to push this far into next generation graphics features, it's clearly not going to be the last.

We've already reviewed the PC version of Metro Exodus Enhanced Edition and will be following up in due course with detailed analysis of the PS5, Xbox Series X and Series S renditions of the game. However, while we were putting together our initial coverage of the game, 4A Games were extremely helpful and collaborative in ensuring the depth and accuracy of our work. If you've watched our video breakdown of the title, you'll have seen the behind-the-scenes editor shots showing level design workflow before and after the transition to ray tracing - but that's just the tip of the iceberg. 4A were also very helpful in going deep - really deep - in explaining how their RT implementation works. On top of that, the developer gave us an excellent overview of the project: why it was time to move their engine to RT, how so many new technologies made their way into Metro Exodus Enhanced Edition, and why we need to wait a little while until the console versions are released.

It's a wealth of in-depth information we're going to be splitting into two deep-dive interviews. This week, we begin with a discussion with Metro Exodus executive producer, Jon Bloch, covering the general approach to developing the game and its new features. Next week, we're catering to the hardcore graphics audience as 4A's CTO Oles Shishkovtsov and senior rendering programmer Ben Archard go into extreme depth on the development of the 4A Engine's brand new ray tracing features.

Exclusive: Metro Exodus Enhanced Edition Analysis - The First Triple-A Game Built Around Ray Tracing

Digital Foundry: What was the time frame and decision-making process about updating Metro Exodus on PC with the Enhanced Edition?

Jon Bloch: It kind of came to be when we were briefed on the Gen 9 consoles supporting ray tracing. We knew that was going to be a cool opportunity to bring our RTGI tech to consoles, and in the process overhaul the systems further. And if we were doing that for console, we also had to take advantage of newer GPUs and expanded RT support in the PC space. We started planning it and looking at the systems we had already started to upgrade for our future titles, figured out what we could get done in time, and just started working on it - it was a pretty easy and unanimous decision for us and Deep Silver that this was a great opportunity to make Exodus even better and to bring some really cool upgrades to all our fans, not only the console ones. Work for this upgrade touched nearly all departments, but mostly programming. Artists needed to go back through and remove fake lights, polish and re-balance scenes; designers needed to make sure systems that rely on lighting, like stealth, still worked. This was not a fast or small undertaking, but we knew we had to do it as the opportunity was too great.

Digital Foundry: The switch to real-time ray tracing for so much of the game's lighting is programmatically and visually profound. What were the production effects of this switch for development and for the artists?

Jon Bloch: One part of it is the response rate of the environment. If you have to bake lights or really run any heavy update process, there is going to be some amount of wait time to see the result of even a minor adjustment. This is the iteration period. The iteration period can make or break the entire development process. Around about a second, maybe a few seconds is fine: people can live with that. If it gets a bit longer, if there is maybe five to 10 seconds wait on something, people start getting afraid to experiment and they might miss out on cool discoveries that can really add something to the game. Any longer than that and you are into 'getting up and going for a coffee' territory and the whole thing collapses. Ideally you want to see the results of your changes instantly. That's why we have put so much focus on ensuring that every graphical feature we have can be achieved in real-time.

Another thing is that there is less placing of fake lights. Often a stylistic decision would have required a lighting artist to set up maybe a soft spotlight that had no real source, or even a straight up region of ambient light. These are generally done to achieve the effect of natural light propagation. Lights do that automatically now: they bounce around corners and (because of DDGI) they gradually work their way into dark recesses without any access to direct illumination. So, a lot of that work can now be done with just the basic light sources on their own, and more realistically so at that.

And a third effect, in terms of actual work done on this project was that artists went through and removed/rebalanced everything. Ray tracing in the first launch of Metro Exodus on PC made a lot of places darker than they should have been, especially interiors or the tunnels where there was no natural sunlight. Now that pretty much every light in the scene (technically only the shadowed ones) can add to the GI, the effect is to have more light than would have been there in the original. So, we could remove some fake lights and tone down or even remove 'real' lights where appropriate.

Digital Foundry: Metro Exodus has some rather large levels with a lot of art content - what was the approach to updating it all?

Jon Bloch: Most of the art content remains the same beyond shipping some textures as 4K versions. This update focuses primarily on improving the underlying engine technology and applying that new technology to the pre-existing content. The main body of work was involved in going through and rebalancing the lighting setup for every environment to try to match the look and feel of the original (non-RT) release, only with a significantly different underlying system. We use the fire particles, which were introduced with the flame thrower in The Two Colonels DLC, for all fire instances now. So, there was some work involved in going through and changing particle system assets. There was also some reworking of a few of the generic light prefabs to turn them into area lights, but these get reused a lot throughout the game.

Digital Foundry: Exodus has two sandbox, open world-lite sections in its campaign (Caspian, Volga) alongside more traditional linear sections (Yamantau, Dead City, the Metro, Two Colonels), and the DLC expands on this in Sam's Story. Given the public response to these two gameplay types, where do you see the series developing in the future?

Jon Bloch: In Metro Exodus we introduced those new sandbox style levels and they were a big success, however the classic Metro tunnel style gameplay from the previous games made fewer appearances, and both our team and fans noticed that. Rest assured we definitely want to keep both and have a goal to make sure that we balance them better in future instalments while still expanding on our ability to provide richer and more complex environments.

Digital Foundry: Exodus takes advantage of a lot of next generation features: DLSS, VRS, DXR. From a production standpoint, how do you prioritise which tech features need to be included, delayed, or cut to release on time?

Jon Bloch: As far as DXR was concerned we went all-in with unfettered enthusiasm as soon as we realized we could. It really is the future, and with the introduction of support to Gen 9 consoles, it has proven that the industry is ready to commit. It takes people adopting new tech like this for the wider community to buy in, and as made apparent by a handful of our colleagues at other studios, we're not alone in this one. We might be taking some risk to dive in so fully, and we might be the first game to require ray tracing support (are we?) but we think the benefits outweigh that, and not just for the development process, but for gamers as well.

With DLSS, the actual implementation was a lot quicker than something like ray tracing. It's still not as simple as 'add a couple of lines of code and it works' - there was still a lot of integration into our post-process pipeline, especially with TAA, but it is still generally quite a plug-and-play system and so you aren't building it all from the ground up. There were also requests from the community and Nvidia to implement the latest version so that had a big influence on the decision-making process. The differences between DLSS 1.0 and 2.0 were significant enough, however, that we needed to choose the right time to upgrade alongside all the other work and platform additions we've been doing these past two years. DLSS 2.0 works differently and we had to make some further pipeline changes to be compatible, so it wasn't a straightforward SDK version upgrade. It made sense to bundle it in with these other upgrades and worked for our schedule as well.

For VRS, Shader Model 6.5, 16-bit FPP [floating point precision] and the sub-features of ray tracing like DDGI, reflections, and multiple light sources, it is a balance of how much we need something to get the game working, how much implementation time is required, and how much of an efficiency boost (or cost) it will give us. Often the latest shader model will ship features that we just won't be able to implement other features without. FPP16 was largely a case of changing type names where applicable so it took a decent amount of time to go through and do it, but it was low risk. We use VRS on our forward (transparent) effects and it basically just replaced something that we were already using an MSAA-based solution for, so it wasn't a huge amount of work even though it was for a minor boost to one part of the pipeline. DDGI took months to implement and balance, but it was the only option for secondary GI, and it is visible throughout the whole game, so it had to get bumped up the list. Reflections are really the main thing that had to be adjustable priority-wise for time concerns. They are one of the most expensive features out there; in order to really do them justice we are going to need to completely overhaul a lot of additional supporting features beyond just lighting; and ultimately, they just come second to GI in terms of importance to our game.

Digital Foundry: 4A has seemed like a PC development studio from the beginning even though it puts out excellent console releases. What is the basis and reason for this heavy PC focus?

Jon Bloch: As you say it's a focus, and certainly from a tech standpoint we can agree. However, our engine is built to be multi-platform and we develop multi-platform. Gameplay-wise there is no primary and there are no ports. Tech-wise, we do focus on PC while still also trying to take advantage of the power and features of consoles. Even with the advantages that our PC releases have over consoles, believe it or not there are some things that we can only do on consoles and not on PC. That being said, if you want to do cutting edge, you do it on PC first. PC tech moves fast, where consoles stick around for a few years. That's good for stability and understanding what you are working towards with known technology or with smaller advances.

Gen 9 consoles are an insane achievement in their own right and their timing could not have been better for us to mature our RT tech and bring it to more of our fans, but also to pave the way for how we build our future titles. For a while it was a real concern that they wouldn't be able to do ray tracing at all at a decent price point so there were fears of having to support two completely different classes of technology in tandem. They did, and it's invaluable to us that we now know we can build towards an RT-based future. Despite that, the same stability I mentioned above can't match the flexibility you have to innovate and experiment on PC. The second factor is ease especially when it comes to things like iterative development. It's easier to lead on PC because you work on PC.

Digital Foundry: 4A ventured into VR with the release of Arktika.1 - what were lessons learned from that project that were carried over into how 4A works today?

Jon Bloch: I think a lot of the optimisation work we did carries over pretty well. It's about knowing what to look out for: what can slow down a frame in VR can slow down a frame anywhere. This was generally little things, like being aware of the cost of each element of the scene, being aware of how you are positioning lights so you don't eat up milliseconds with shadow renders, and understanding how much each part of your pipeline costs and budgeting for it accordingly. These seem like obvious considerations, and it is the sort of thing that you learn from regular game development too, but the hard 90fps demand that VR imposes on you makes you a lot more conscious of that sort of thing than you are when you work to 30fps or 60fps. In VR you need to be a lot more aggressive when it comes to feature creep that can occasionally push you under your limit. We have been a lot more aggressive in trying to make sure we achieve 60fps with this update on consoles and target PC hardware.

We did also manage to port back some of our shaders. The burning effects in The Two Colonels, for example, are based on one of the dissolving weapon effects in Arktika.1. Beyond that though, Arktika.1 used the same engine as Exodus, although branched halfway through development as we released in 2017, but the bulk of lessons learnt were very much about what you can and can't do in VR games specifically. Transparent billboard effects look different in VR. Offscreen render targets are way too expensive and we were insane to use them in VR. You have far less control of where the camera goes in VR, so everything needs to be as high detail as possible, and you can't just clip areas the camera won't see. You can cheat a whole lot less in VR. VR is very much its own beast.
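As an editorial aside, the frame-rate targets mentioned in this answer (30fps, 60fps and VR's 90fps) can be turned into per-frame time budgets with simple arithmetic. The sketch below is only an illustration of that budgeting idea: the function names, the render pass names and the millisecond costs are all hypothetical and do not come from 4A Games or the 4A Engine.

```python
def frame_budget_ms(target_fps: float) -> float:
    """Time available to render a single frame, in milliseconds."""
    return 1000.0 / target_fps


def remaining_budget_ms(target_fps: float, pass_costs_ms: dict) -> float:
    """Budget left after summing every pass; negative means over budget."""
    return frame_budget_ms(target_fps) - sum(pass_costs_ms.values())


# Hypothetical per-pass GPU costs in milliseconds (illustrative values only).
passes = {"shadows": 2.5, "gbuffer": 3.0, "lighting": 4.0, "post": 2.0}

for fps in (30, 60, 90):
    left = remaining_budget_ms(fps, passes)
    status = "within budget" if left >= 0 else "over budget"
    print(f"{fps}fps: budget {frame_budget_ms(fps):.2f} ms, "
          f"remaining {left:.2f} ms ({status})")
```

With these made-up numbers, the same set of passes fits comfortably at 30fps and 60fps but overshoots the roughly 11.1 ms available at 90fps, which is the kind of pressure the answer describes.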

Digital Foundry: We are seeing a staggered release here for Exodus, PC first, consoles later - what are some of the reasons for this?

Jon Bloch: Most of it is timing, we're ready with the PC version now and we didn't want to hold it back. Consoles take time to go through certifications, manufacturing, etc. Since we finished them both around the same time and we don't have that extra publishing time to worry about, we figured we'd not make our PC fans wait any longer than they needed to. Normally we'd do things a bit differently for a full new release, where you focus on consoles first, get them to RTM, and then finalise PC while all that is happening, but things worked differently with scheduling this time so we've taken advantage of it. Big, global, multiplatform releases are often done all at the same time for market reasons - you want all purchase options 'on the shelf' at the same time so that everyone can get what they want on the same day. This is a free update to all existing Metro Exodus owners, so we were thankfully unbound by those traditional restraints.
