Google Stadia specs: is this our first taste of next-gen?

Vastly improved CPU power, 10.7TF graphics - and unique cloud features.

With the announcement of Stadia, we're off the blocks. The first next-gen platform has been revealed and while Google isn't going into too much depth about specs, we know enough to paint a compelling picture of the new system's capabilities. In terms of its potential performance, there are comparison points with the consoles to come from Sony and Microsoft, but at the same time, the whole nature of the enterprise is a massive step beyond what is possible not just from the consoles of the here and now, but even future boxes too.

And this is the thing: when we analyse the specifications of a new piece of hardware, expectations need to be offset against reality. Fundamentally, a console has to be built with a reasonable per-unit cost in mind, meaning that you will never get the absolute state-of-the-art. Bang for buck is king. It also needs to deliver excellent performance within a small form factor, meaning it can't be too powerful - PlayStations and Xboxes have very tight thermal windows, after all.

Stadia's cloud-based nature removes some key limitations. Build cost is less of an issue because Google isn't building a box for every user, while the standard server 'blade' form factor opens up the thermal window significantly. For example, Stadia uses a discrete server-class CPU and a separate AMD GPU, rather than the all-in-one system-on-chip we're likely to see in next-gen Xbox and PlayStation consoles. It's more expensive and trickier to keep cool, but it's standard form for GPU-equipped cloud servers.

From a hardware perspective, Stadia out-specs every console on the market right now, but there are two key compromises. Firstly, audio-visuals are compressed, meaning an inevitable loss of quality. Secondly, beaming your inputs to the cloud to be processed, then returning the result to the user, takes time. On these two matters, we have been hands-on with the latest iteration of the Stadia technology and can provide you with some data, but first, let's break down everything we know about the system.

Google's Stadia specs

Google has released the following data for Stadia. It's a curious mixture of data points, combining the kind of minutiae rarely released on some components along with notable omissions elsewhere, such as the number of cores/threads available for developers on the CPU. Regardless, it paints a picture of a highly capable system, clearly more powerful than both the base and enhanced consoles of the moment.

- Custom 2.7GHz hyper-threaded x86 CPU with AVX2 SIMD and 9.5MB L2+L3 cache

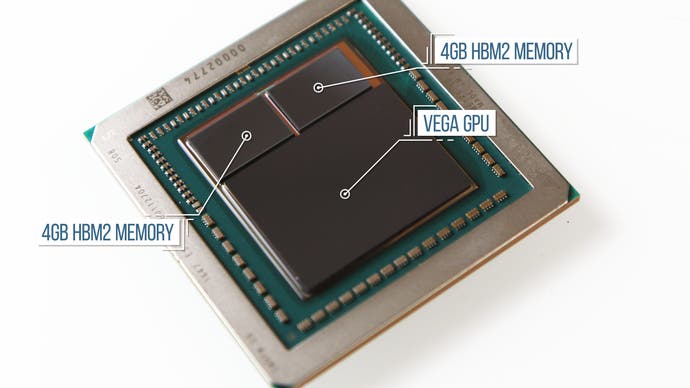

- Custom AMD GPU with HBM2 memory and 56 compute units, capable of 10.7 teraflops

- 16GB of RAM with up to 484GB/s of performance

- SSD cloud storage

Google says that this hardware can be stacked, that CPU and GPU compute is 'elastic', so multiple instances of this hardware can be used to create more ambitious games. The firm also refers to this configuration as its 'first-gen' system, the idea being that datacentre hardware will evolve over time with no user-side upgrades required. Right now, it's not clear if the 16GB of memory is for the whole system, or for GPU VRAM only. However, the bandwidth confirmed is a match for the HBM2 used on AMD's RX Vega 64 graphics card, rather than the 410GB/s of the 56-CU RX Vega 56.

CPU processing power: a generational leap over current-gen

There's no specific confirmation of who is supplying the custom CPU to Google for this project, but it is confirmed to be operating at 2.7GHz. The configuration is unlike anything we've seen offered so far from AMD, perhaps suggesting another, very prominent vendor - and Google has also confirmed to us that the CPU does not sit on the same package as the GPU. Immediately we know that the Stadia set-up is very, very different to what we should expect from the next-gen systems in development by Sony and Microsoft, where we expect to see Ryzen cores integrated on the same silicon as the GPU and memory controllers.

When we spoke to Google VP Majd Bakar, he stressed the custom nature of the processor. The firm isn't saying at this time how many cores or threads are available to developers, other than Phil Harrison suggesting that it is 'a lot'; he also tells us that the CPU is server-class. Suffice to say that any kind of modern, many-core CPU will offer a true generational leap in processing power over today's consoles, while the system - based on Linux - shouldn't have to contend with the 'bloat' associated with running the Windows OS on a home PC system.

Graphics: Custom AMD graphics core rated at 10.7 teraflops

Google has collaborated with AMD to deliver a custom graphics core for the Stadia project. Again, details on the architectural make-up of the GPU have not been disclosed, but 10.7 teraflops of compute has been confirmed, delivered via 56 compute units. Based on those numbers, Stadia's GPU core will be clocked at 1495MHz or in that ballpark. Cloud server GPUs can be virtualised, their resources spread between multiple users - but Google has told us that this does not happen on the Stadia set-up, meaning the full 10.7TF per player instance.
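The clock estimate can be reproduced with some simple arithmetic. This is a rough sketch rather than anything Google or AMD has confirmed: it assumes a GCN-style layout of 64 stream processors per compute unit, each retiring one fused multiply-add (two floating-point operations) per clock. The function and constant names are ours, for illustration only.

```python
# Assumption: GCN-style layout with 64 stream processors per compute unit,
# each retiring one fused multiply-add (2 FLOPs) per clock cycle.
SHADERS_PER_CU = 64
FLOPS_PER_SHADER_PER_CLOCK = 2


def implied_clock_mhz(teraflops: float, compute_units: int) -> float:
    """Return the GPU clock (MHz) implied by a peak FP32 teraflops rating."""
    flops_per_clock = compute_units * SHADERS_PER_CU * FLOPS_PER_SHADER_PER_CLOCK
    clock_hz = teraflops * 1e12 / flops_per_clock
    return clock_hz / 1e6


# Figures from Google's spec list: 10.7 teraflops across 56 compute units.
stadia_clock = implied_clock_mhz(10.7, 56)
print(f"Implied GPU clock: {stadia_clock:.0f}MHz")
```

Because 10.7TF is itself a rounded figure, the result only pins the clock down to within a few MHz, which is why the estimate is best read as a ballpark.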

When asked whether Stadia employs the Vega architecture or the upcoming - and extremely mysterious - Navi, Google would not comment. What we can say is that the Project Stream tech demo, carried out at the end of last year and stretching into 2019, ran on Stadia hardware within Google's datacentres. This would indicate that the final hardware was good to go some time before that. Also, perhaps it is entirely coincidental, but Crytek released a real-time ray tracing demo last week, running without RT acceleration on an RX Vega 56, which (as mentioned) is the closest consumer equivalent to Stadia's GPU - the same number of CUs, similar clocks and also using HBM2 memory.

Regardless of whether the Stadia GPU is based on Vega or something more advanced, the processor will inevitably have many advantages over the current generation of consoles. In terms of raw compute, there's an additional 78 per cent of throughput compared to the Scorpio Engine in Xbox One X, and a 5.8x improvement compared to the base PlayStation 4. However, compute is only one aspect of how capable a GPU is. The Stadia processor also stands to benefit from years' worth of AMD architectural improvements and any custom features Google itself may have added to the design.
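For reference, the compute comparison works out as follows. The Stadia figure comes from Google's spec list, while the 6.0TF and 1.84TF console ratings are the commonly quoted vendor numbers, which we are assuming here rather than taking from Google's data.

```python
# Peak FP32 compute in teraflops.
STADIA_TF = 10.7       # from Google's published Stadia specs
XBOX_ONE_X_TF = 6.0    # assumed: commonly quoted Scorpio Engine rating
PS4_TF = 1.84          # assumed: commonly quoted base PlayStation 4 rating

# Extra throughput over Xbox One X, expressed as a percentage.
xbox_gain_pct = (STADIA_TF / XBOX_ONE_X_TF - 1) * 100

# Throughput relative to the base PS4, expressed as a multiple.
ps4_multiple = STADIA_TF / PS4_TF

print(f"vs Xbox One X: +{xbox_gain_pct:.0f} per cent")
print(f"vs base PS4: {ps4_multiple:.1f}x")
```

These are paper comparisons of peak compute only, so they say nothing about architectural efficiency or memory bandwidth.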

Google has also demonstrated scalability on the graphics side, with a demonstration of three of the AMD GPUs running in concert. Its stated aim is to remove as many of the limiting factors impacting game-makers as possible, and with that in mind, the option is there for developers to scale projects across multiple cloud units:

"The way that we describe what we are is a new generation because it's purpose-built for the 21st century," says Google's Phil Harrison. "It does not have any of the hallmarks of a legacy system. It is not a discrete device in the cloud. It is an elastic compute in the cloud and that allows developers to use an unprecedented amount of compute in support of their games, both on CPU and GPU, but also particularly around multiplayer."

Memory: 16GB of HBM2 memory, with 484GB/s of bandwidth

Google says that the Stadia client set-up has HBM2 memory - 16GB in total, which is shared between the CPU and GPU. This suggests a tight integration of the CPU and GPU, but the firm has also said that these components are not integrated in a single chip, as is the case on current-gen consoles (and we suspect the next-gen ones too).

The HBM2 memory is rated for 484GB/s of bandwidth, which is identical to the throughput of the AMD Radeon RX Vega 64, which uses a wide 2048-bit memory interface with the HBM2 memory running at 945MHz (the RX Vega 56 runs the same interface at 800MHz, for 410GB/s). Further specs on Stadia's memory set-up should prove fascinating if they are released further on down the line, but this set-up of sharing HBM2 across CPU and GPU is certainly the first example we've come across.
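As a rough check on figures like these, peak HBM2 bandwidth can be estimated from bus width and memory clock. The sketch below assumes double-data-rate signalling (two transfers per clock), and the function names are ours, for illustration. On that assumption, a 2048-bit interface at 800MHz works out at roughly 410GB/s, and it takes a clock of around 945MHz to reach 484GB/s.

```python
def hbm2_bandwidth_gbs(bus_width_bits: int, memory_clock_mhz: float) -> float:
    """Peak bandwidth in GB/s, assuming two transfers per clock (DDR)."""
    transfers_per_second = memory_clock_mhz * 1e6 * 2
    bytes_per_transfer = bus_width_bits / 8
    return transfers_per_second * bytes_per_transfer / 1e9


def clock_for_bandwidth_mhz(bus_width_bits: int, target_gbs: float) -> float:
    """Memory clock (MHz) needed to hit a target bandwidth on a given bus."""
    bytes_per_transfer = bus_width_bits / 8
    transfers_per_second = target_gbs * 1e9 / bytes_per_transfer
    return transfers_per_second / 2 / 1e6


print(f"2048-bit @ 800MHz: {hbm2_bandwidth_gbs(2048, 800):.1f}GB/s")
print(f"2048-bit @ 945MHz: {hbm2_bandwidth_gbs(2048, 945):.1f}GB/s")
print(f"Clock for 484GB/s: {clock_for_bandwidth_mhz(2048, 484):.0f}MHz")
```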

Storage and infrastructure: the cloud advantage

Because of its server-based design, Stadia has potentially huge advantages over home consoles and PC. Google's objective for game loading times is to boot any game in five seconds, and this will inevitably extend to in-game loading too. For developers, the need to create games within the 50GB/100GB constraints of the Blu-ray disc has now been completely removed. On top of this, hosting hardware in the cloud presents fundamental advantages to developers that could be game-changing, especially for multiplayer games and persistent worlds.

In a standard multiplayer game using a dedicated server, the client software operates on your local machine, which has only a very narrow window of bandwidth to the server. This limits the level of communication, and by extension, the level of sophistication in multiplayer games. With Stadia, the 'client' running the game experience is effectively a peer of the server, running on the same network with a high bandwidth interconnect. This could lead to massive improvements in player count, world simulation quality and physics. Cheating within a multiplayer game is also far more difficult if the user has zero access to the client-side code.

In a world where console power is often tied to the capabilities of the CPU and GPU, I think it's worth stressing how significant these advantages are. Fundamentally, while next-gen consoles will no doubt produce some very special experiences, removing storage limits and bringing clients and servers closer together could dramatically change the kinds of games we play. It's a true generational leap that any new local-based next-gen console can't deliver - but making the most of this opportunity will rely on developers exploiting those capabilities, which is by no means certain in a world dominated by multi-platform development. The pitch certainly sounds full of potential though, with Google arguing that multiplayer titles in particular are currently limited by the very nature of running code natively in a local box, far away from the dedicated server - if there is one at all.

Phil Harrison: "In our platform, the client and the server are inside the same architecture and so whereas historically you'd be talking about milliseconds of ping times between client and server, in our architecture you're talking about microseconds in some cases and so that allows us to scale up in a very dramatic way the numbers of players that can be combined in a single instance and obviously the go-to example would be battle royale going from hundreds of players to thousands of players or even tens of thousands of players. Whether that's actually fun or not is a different debate but technologically that is just a headline-grabbing number that you can imagine."
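To put the millisecond-versus-microsecond point in perspective, here is a back-of-the-envelope sketch of how many client-server round trips fit inside a single frame. The 60fps target, the 30ms internet ping and the 200µs datacentre hop are all hypothetical values chosen for illustration, not figures from Google.

```python
FRAME_RATE_HZ = 60  # assumed target frame rate


def round_trips_per_frame(round_trip_seconds: float,
                          frame_rate_hz: float = FRAME_RATE_HZ) -> float:
    """How many client-server round trips fit in one frame's time budget."""
    frame_budget_seconds = 1.0 / frame_rate_hz
    return frame_budget_seconds / round_trip_seconds


# Hypothetical latencies, for illustration only.
internet_ping = 30e-3     # 30ms: home client to a remote dedicated server
datacentre_hop = 200e-6   # 200 microseconds: client and server on one network

home_trips = round_trips_per_frame(internet_ping)
cloud_trips = round_trips_per_frame(datacentre_hop)

print(f"Home client: {home_trips:.2f} round trips per frame")
print(f"Cloud client: {cloud_trips:.0f} round trips per frame")
```

With these assumed numbers, a home client cannot complete even one exchange with the server inside a frame, while a co-located client could manage dozens, which is the kind of headroom that would allow far more state to be shared between players.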

And because Stadia is a cloud server, it delivers other advantages that a traditional console cannot match. The fast loading times are only possible with a state-of-the-art solid-state storage solution - too expensive for home consoles built to a price. On top of that is the virtual elimination of storage limits in the cloud, with Google telling us that there's access to petabytes of storage for game-makers (one petabyte is 1024TB). For gamers, one of the most significant advantages of Google's cloud infrastructure will be that you should never experience 'friction' in the game experience: system software updates, game patches and lengthy installs are all taken care of in the cloud and invisible to the user, who should get an entirely seamless experience.

Google Stadia - the first next-gen gaming system?

As always, it's the games that matter and based on what we've seen, plus the GDC keynote demos, there's the sense that Google is still keeping a lot of its powder dry. What we do know is that developers have a new mechanism for delivering games that has both strengths and weaknesses. As a cloud delivery system, latency can't be completely eliminated, while a compressed image with a bandwidth limit will lack the pristine edge offered by a local digital video connection. High-speed, fast action content may exhibit macroblocking artefacts - we've taken an updated look at Google's streaming technology and assessed its controller in a separate article.

These should be offset against the advantages, which are very exciting indeed. First of all, the quality of life advantages should potentially return us to what console gaming should be about - instant, plug-and-play (or rather click-to-play) gaming and very short loading times. With the choice of CPU, we're looking at a vast increase in processing power, capable of realising richer, deeper worlds and more advanced simulation. The GPU doubles the amount of memory compared to the PS4, even before system RAM is factored in, while graphics power, in terms of compute at least, is a substantial leap over today's standard - the PlayStation 4. On top of that, Google is looking to stack client hardware in order to brute-force its way to even higher performance.

Meanwhile, the possibilities for multiplayer gaming are tremendous, with the ability for the traditional server and client to be more closely integrated than we've ever seen before. In terms of storage and multiplayer potential, the advantages offered by a cloud system effortlessly outpace what any local system built to a set per-unit cost will deliver. The biggest outstanding question is simple - in a world of multi-platform development, will developers exercise the infrastructure advantages that Stadia delivers? In our interview with Google, Phil Harrison seems highly optimistic about third-party support, and it'll be interesting to see how - or if - developers utilise the cloud-based strengths of the platform in a world where multiplayer is still built around local hardware, and where the momentum is actually towards connecting all systems, regardless of platform.

But if gaming does gravitate towards what Google describes as a 'cloud native' model, there are reasons to be excited, because we may well have a compelling answer to a very simple question - what is next-gen? Over and above faster hardware, what will the next generation of gaming platforms deliver that the current consoles can't? More pixels won't cut it - there needs to be a vision, and with the reveal of Stadia, we have our first clear look at a potential future for gaming.